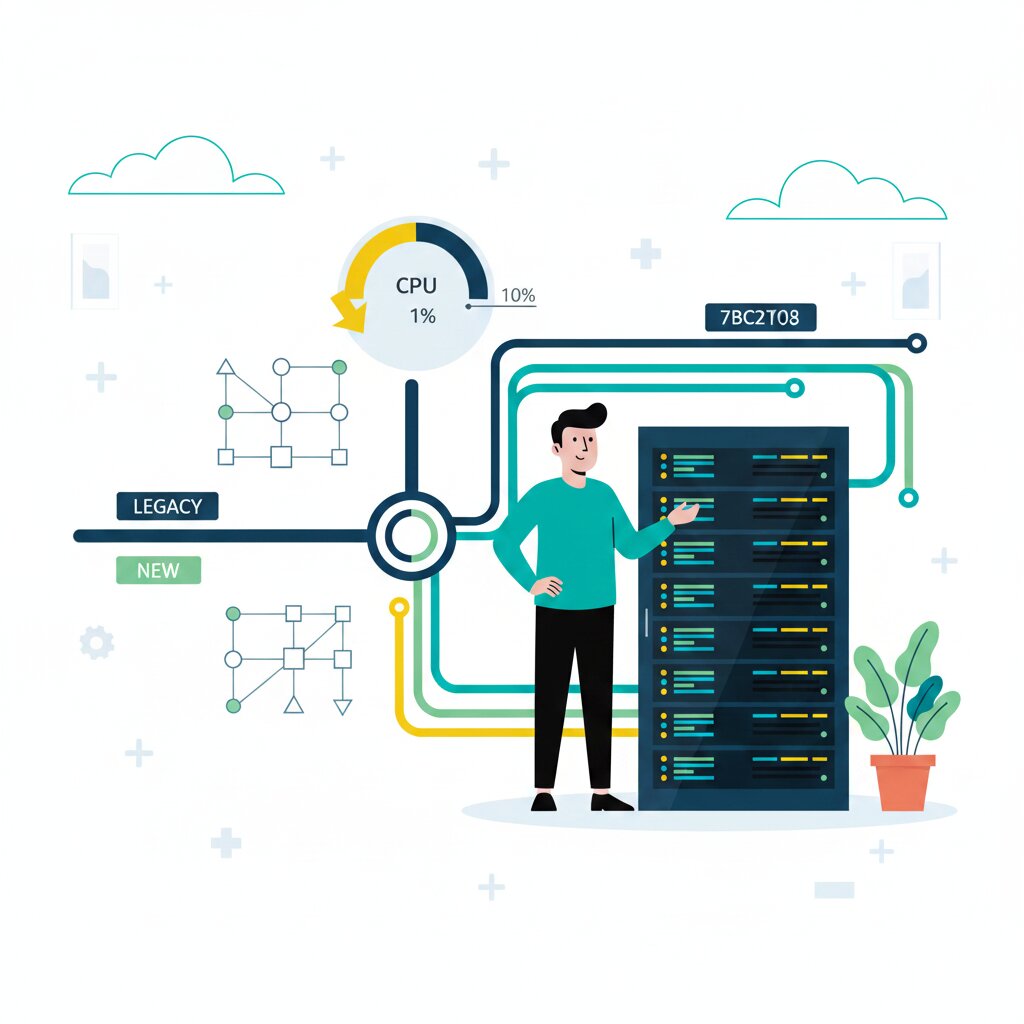

In April 2026, Airbnb’s observability engineering team published the details of a large-scale metrics pipeline migration. They moved from StatsD and an internal fork of Veneur to OpenTelemetry Protocol (OTLP), the OpenTelemetry Collector, and VictoriaMetrics’ vmagent. The new system ingests over 100 million samples per second in production, and CPU time spent on metrics processing in their JVM services dropped from 10% to under 1%.

That headline number is compelling. But the migration required solving specific correctness problems — counter resets, memory pressure, aggregation gaps — that only surfaced at scale. If you are evaluating a similar move, the enterprise OpenTelemetry migration playbook provides a broader decision framework.

What was Airbnb running before — and why did StatsD at 100 million samples per second stop working?

Airbnb’s legacy metrics pipeline ran on StatsD over UDP with an internal fork of Veneur handling aggregation before forwarding everything to a metrics vendor. StatsD is a push-based protocol where services fire UDP datagrams containing metric data. It is lightweight, widely adopted, and at moderate scale it works fine.

At 100 million samples per second, it stops working fine.

UDP provides no delivery guarantee and no backpressure. At high throughput, packets get silently dropped, so you lose metrics without knowing you have lost them. The Veneur fork also carried its own maintenance burden, and it had no path to Prometheus compatibility without a significant rewrite.
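To make the fire-and-forget behaviour concrete, here is a minimal sketch of a StatsD-style emitter. The function names, the port, and the metric are illustrative assumptions, and the tag suffix follows the DogStatsD extension rather than plain StatsD; none of it is taken from Airbnb's library.

```python
import socket
from typing import Dict, Optional, Tuple


def format_counter(name: str, value: int, tags: Optional[Dict[str, str]] = None) -> bytes:
    """Render one StatsD counter line, e.g. b"requests:1|c|#region:us"."""
    line = f"{name}:{value}|c"
    if tags:
        # The "|#k:v" tag suffix is the DogStatsD extension, not plain StatsD.
        line += "|#" + ",".join(f"{k}:{v}" for k, v in sorted(tags.items()))
    return line.encode("ascii")


def emit(sock: socket.socket, addr: Tuple[str, int], payload: bytes) -> int:
    # sendto returns once the datagram is handed to the kernel. There is no
    # acknowledgement, so a drop further along is invisible to the caller.
    return sock.sendto(payload, addr)


if __name__ == "__main__":
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    # Hypothetical local agent address; nothing needs to be listening.
    emit(sock, ("127.0.0.1", 8125), format_counter("requests", 1, {"region": "us"}))
    sock.close()
```

The sender has no return channel at all, so neither the service nor the aggregator can tell how many datagrams went missing.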

The team also needed to support three coexisting instrumentation worlds. Existing StatsD libraries were deployed across the service fleet. OTLP adoption was growing. And a new Prometheus-compatible storage backend based on Grafana Mimir required cumulative counter semantics — fundamentally different from StatsD’s delta-based push model. The legacy stack could not bridge these.

How did Airbnb run two pipelines simultaneously without service disruption?

They used a dual-write pattern. Roughly 40% of Airbnb services use a shared, platform-maintained metrics library. The observability team updated this library to dual-emit — sending StatsD to the legacy pipeline while simultaneously emitting OTLP to the new OpenTelemetry Collector.

This approach avoids the coordination overhead of a big-bang cutover. No service team needed to schedule a migration window. No dashboards broke during the transition. Both pipelines ran in parallel, processing the same metrics, so the team could validate parity before cutting over.

The strategy was to front-load collection — get all metrics flowing into the new system first, then address dashboards, alerts, and user-facing tooling with real data already available. This worked because the shared library gave them a single point to make the change.
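The dual-emit idea can be sketched as a thin facade that fans every sample out to two sinks. This is an illustration only: `DualEmitter`, the sink signature, and the `totals` parity check are hypothetical names, not Airbnb's library API, and the sinks here are plain lists standing in for the StatsD and OTLP exporters.

```python
from typing import Callable, Dict, List, Tuple

Sample = Tuple[str, int, Dict[str, str]]  # (metric name, delta, labels)
Sink = Callable[[Sample], None]


class DualEmitter:
    """Hypothetical shared-library facade that fans each sample out to two sinks."""

    def __init__(self, legacy: Sink, modern: Sink) -> None:
        self._sinks = (legacy, modern)

    def increment(self, name: str, value: int = 1, **labels: str) -> None:
        sample = (name, value, dict(labels))
        for sink in self._sinks:
            try:
                sink(sample)
            except Exception:
                # A failure in one pipeline must not block the other,
                # or break the calling service.
                pass


def totals(samples: List[Sample]) -> Dict[str, int]:
    """Sum deltas per metric name so the two pipelines can be compared."""
    out: Dict[str, int] = {}
    for name, value, _ in samples:
        out[name] = out.get(name, 0) + value
    return out


legacy_seen: List[Sample] = []
modern_seen: List[Sample] = []
metrics = DualEmitter(legacy_seen.append, modern_seen.append)
metrics.increment("bookings", currency="USD")
metrics.increment("bookings", 2, currency="EUR")
parity = totals(legacy_seen) == totals(modern_seen)
```

Because call sites only see `increment`, the change stays inside the shared library, which is what makes the single point of change possible.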

Airbnb is not the only team to take this approach. Shopify used the same dual-write pattern when they migrated their observability stack to Prometheus, Loki, Tempo, and Grafana. Their motivation was primarily financial — three separate vendors for metrics, logs, and traces had become expensive enough to justify rebuilding. The dual-write pattern let them validate the new stack against the old one before committing.

Switching to OTLP also gave Airbnb backend portability. Because OTLP decouples collection from storage, the move to Grafana Mimir was low-risk — the backend became replaceable without re-instrumenting services.

What is delta temporality and why did it fix JVM memory pressure?

After enabling OTLP across the fleet, Airbnb hit a problem with their highest-cardinality services — the ones emitting upward of 10,000 samples per second per instance. These services experienced memory pressure and increased garbage collection.

The root cause was the default metrics reporting mode: cumulative temporality. In cumulative mode, the OpenTelemetry SDK retains the full state of every metric-label combination between exports. If you have a service producing thousands of unique label combinations every second, memory consumption grows proportionally and stays there.

The fix was switching those specific services to delta temporality using AggregationTemporalitySelector.deltaPreferred(). In delta mode, each export contains only the change since the last export. The SDK does not need to hold the entire metric state in memory — it ships the diff and resets.

The trade-off is straightforward: if a service crashes unexpectedly under delta temporality, you get a visible data gap rather than an anomalous jump. For Airbnb’s high-cardinality services, that trade-off was worth it. Most services continued using cumulative mode — delta was only necessary for the extreme cases.
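The memory difference between the two modes can be shown with a toy model. This is not the OpenTelemetry SDK: `CounterStore` is a hypothetical stand-in that only models which series are retained between exports, under the assumption that label combinations churn and rarely recur.

```python
from typing import Dict, Tuple

Key = Tuple[Tuple[str, str], ...]  # sorted label pairs


class CounterStore:
    """Toy model of SDK-side counter state under one temporality."""

    def __init__(self, delta: bool) -> None:
        self.delta = delta
        self.state: Dict[Key, int] = {}

    def add(self, value: int, **labels: str) -> None:
        key = tuple(sorted(labels.items()))
        self.state[key] = self.state.get(key, 0) + value

    def export(self) -> Dict[Key, int]:
        snapshot = dict(self.state)
        if self.delta:
            # Delta: ship the change since the last export, then forget it.
            self.state.clear()
        # Cumulative: keep every series so the next export can report totals.
        return snapshot


cumulative, delta = CounterStore(delta=False), CounterStore(delta=True)
for cycle in range(3):
    for i in range(1000):
        # Each cycle sees 1000 label combinations that never recur.
        labels = {"user": f"u{cycle}-{i}"}
        cumulative.add(1, **labels)
        delta.add(1, **labels)
    cumulative.export()
    delta.export()

retained = (len(cumulative.state), len(delta.state))
```

After three export cycles the cumulative store still holds all 3,000 series while the delta store holds none, which mirrors the heap growth described above.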

How did zero injection solve Prometheus counter resets in a high-cardinality pipeline?

This was the most technically challenging problem in the migration.

After completing the collection migration, the team noticed that PromQL queries over certain counters were consistently underreporting compared to the legacy vendor. The discrepancy was systematic, and it was hitting business-critical metrics.

The root cause sits in how Prometheus handles counters. In StatsD, each data point is a delta: the count since the last flush. In Prometheus, data points are cumulative, and the rate() function derives deltas by comparing consecutive samples. This works when counters increment steadily. But a new series first appears already holding its first increment, with no earlier sample to compare against, so rate() cannot see that increment. If the pod restarts before the counter increments again, the increment is never counted.

At Airbnb, many counters track high-dimensional, low-frequency events — requests per currency per user per region, for example. Any given label combination might increment only a few times per day. These are exactly the counters where the restart-induced data loss hits hardest, and they are often the business-critical ones.
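A simplified model of how increases are derived from cumulative samples shows where the count goes missing. The `increase` function below is a sketch: it sums deltas between consecutive samples and treats a drop as a reset, but omits the window-edge extrapolation that Prometheus applies. The sample values are made up for illustration.

```python
from typing import List


def increase(samples: List[float]) -> float:
    """Simplified increase(): sum of deltas between consecutive samples.

    A drop in value is treated as a counter reset, so the post-reset value
    counts in full. Prometheus additionally extrapolates to the window
    edges, which this sketch leaves out.
    """
    total = 0.0
    for prev, cur in zip(samples, samples[1:]):
        total += cur - prev if cur >= prev else cur
    return total


# One pod's lifetime for a rarely-hit label combination: the series is
# created by its first increment, so its first observed value is already 1.
without_zero = [1.0, 1.0, 1.0]
# The same lifetime if a zero sample had existed before the increment.
with_zero = [0.0, 1.0, 1.0, 1.0]

lost = increase(without_zero)  # 0.0: the increment is invisible
seen = increase(with_zero)     # 1.0: the increment is counted
```

For a counter that increments thousands of times per pod lifetime, losing the first increment is noise. For one that increments once, it is the whole signal.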

The team considered and rejected several fixes. Pre-initialising all counters to zero was impractical at scale with unpredictable label combinations. Replacing counters with gauges was counter-conventional in Prometheus. Pushing workaround PromQL onto every dashboard author would not scale.

The chosen solution was zero injection. When an aggregated counter is flushed for the first time, vmagent emits a synthetic zero instead of the actual running total. The real accumulated value is flushed on the subsequent interval. This anchors every counter to zero, matching Prometheus semantics, with only a single flush-interval lag on the first increment.
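The behaviour described above can be sketched in a few lines. This is a toy model of the idea, not vmagent's implementation or Airbnb's patch: `ZeroInjectingAggregator` and the series name are hypothetical.

```python
from typing import Dict, Set


class ZeroInjectingAggregator:
    """Toy aggregator: running totals per series, zero on the first flush."""

    def __init__(self) -> None:
        self.totals: Dict[str, int] = {}
        self.flushed_before: Set[str] = set()

    def add(self, series: str, delta: int) -> None:
        self.totals[series] = self.totals.get(series, 0) + delta

    def flush(self) -> Dict[str, int]:
        out: Dict[str, int] = {}
        for series, total in self.totals.items():
            if series in self.flushed_before:
                out[series] = total
            else:
                # First flush: emit a synthetic zero and hold the real total
                # back until the next interval.
                out[series] = 0
                self.flushed_before.add(series)
        return out


agg = ZeroInjectingAggregator()
agg.add("bookings{currency=ISK}", 1)
first = agg.flush()   # {"bookings{currency=ISK}": 0}
second = agg.flush()  # {"bookings{currency=ISK}": 1}
agg.add("bookings{currency=ISK}", 2)
third = agg.flush()   # {"bookings{currency=ISK}": 3}
```

The flushed sequence 0, 1, 3 gives the query layer a zero baseline to diff against, at the cost of the first increment arriving one flush interval late.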

The fix lives in the aggregation tier as a configuration-level change — invisible to application teams, requiring no changes to instrumentation code. VictoriaMetrics’ core developer has noted that an alternative approach exists using MetricsQL’s increase_pure() function, but Airbnb’s solution works at the aggregation tier without requiring a specific query language.

Why was the OpenTelemetry Collector alone not enough for aggregation?

Airbnb’s previous pipeline aggregated away instance-level labels — pod, hostname — using Veneur before sending metrics to storage. The new Prometheus-based stack required the same aggregation step to keep storage costs manageable. Without it, every pod restart creates a new time series, and cardinality explodes.
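As a rough illustration of what aggregating away instance-level labels means, the sketch below sums samples whose label sets differ only in pod or hostname. The service names and values are invented, and `aggregate` is a hypothetical helper rather than any tool's API.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

Labels = Dict[str, str]
SeriesKey = Tuple[Tuple[str, str], ...]
INSTANCE_LABELS = {"pod", "hostname"}  # the instance-level labels named above


def aggregate(samples: Iterable[Tuple[Labels, float]]) -> Dict[SeriesKey, float]:
    """Sum values across series that differ only in instance-level labels."""
    out: Dict[SeriesKey, float] = defaultdict(float)
    for labels, value in samples:
        key = tuple(sorted((k, v) for k, v in labels.items() if k not in INSTANCE_LABELS))
        out[key] += value
    return dict(out)


raw = [
    ({"service": "search", "region": "us", "pod": "search-0"}, 4.0),
    ({"service": "search", "region": "us", "pod": "search-1"}, 6.0),
    ({"service": "search", "region": "eu", "pod": "search-2"}, 1.0),
]
aggregated = aggregate(raw)
```

Three raw series collapse into two stored series here, and the count no longer grows with the number of pods. That collapse is the aggregation step the new stack needed.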

The OpenTelemetry Collector could not do this. Despite open proposals, it lacked native metric aggregation support at the time of Airbnb’s migration. The team evaluated five alternatives, four of which were ruled out:

- Continuing with Veneur would have required a rewrite for Prometheus compatibility.

- Recording rules require storing raw data in the TSDB before aggregation, defeating the cost-saving purpose.

- Vector lacked built-in horizontal scaling, and Rust adoption was limited at Airbnb.

- m3aggregator was architecturally more complex than necessary.

That left vmagent from VictoriaMetrics. It was selected for built-in streaming aggregation for Prometheus metrics, horizontal sharding support, approachable documentation, and a small codebase of roughly 10,000 lines that made internal customisation tractable. Several generic improvements were contributed back upstream.

The production architecture uses two layers of vmagent. Stateless router pods shard metrics by consistently hashing all labels except the ones to be aggregated away. Stateful aggregator pods maintain running totals in memory and perform the actual aggregation. Routers are configured with a static list of aggregator hostnames, leveraging Kubernetes StatefulSet stable network identities to avoid service discovery dependencies. The production cluster scaled to hundreds of aggregators.
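The router's job can be sketched as follows. The text says only that routers hash consistently; this example swaps in rendezvous hashing as one simple consistent scheme, which may differ from what vmagent does internally. The hostnames are hypothetical StatefulSet-style names and the label set is invented.

```python
import hashlib
from typing import Dict, List

AGGREGATED_AWAY = {"pod", "hostname"}
# Hypothetical StatefulSet-style hostnames; the real names are not given.
AGGREGATORS: List[str] = [f"vmagent-aggregator-{i}" for i in range(4)]


def routing_key(labels: Dict[str, str]) -> str:
    """Stable string over every label except the ones aggregated away."""
    kept = sorted((k, v) for k, v in labels.items() if k not in AGGREGATED_AWAY)
    return ",".join(f"{k}={v}" for k, v in kept)


def pick_aggregator(labels: Dict[str, str], hosts: List[str] = AGGREGATORS) -> str:
    """Rendezvous hashing: score every host for this key, take the highest."""
    key = routing_key(labels)

    def score(host: str) -> int:
        digest = hashlib.sha256(f"{host}|{key}".encode("utf-8")).digest()
        return int.from_bytes(digest[:8], "big")

    return max(hosts, key=score)


# Two pods reporting the same logical series land on the same aggregator.
a = pick_aggregator({"__name__": "bookings", "currency": "USD", "pod": "api-0"})
b = pick_aggregator({"__name__": "bookings", "currency": "USD", "pod": "api-7"})
```

Excluding the aggregated-away labels from the key is the important part: every sample that will be summed into one output series must reach the same stateful aggregator, otherwise each aggregator would hold only a partial total.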

What specifically produced the 10x CPU reduction?

The 10x figure comes from JVM profiling data. CPU time spent on metrics processing dropped from 10% to under 1% of total CPU samples after migrating from StatsD to OTLP.

Three factors drove the improvement. First, OTLP uses TCP with proper flow control instead of StatsD’s fire-and-forget UDP — no more packet loss, no more silent data gaps. Second, eliminating Veneur removed an entire processing layer from the pipeline. Third, vmagent’s streaming aggregation is more efficient than the legacy aggregation path.

OTLP also brought a structural benefit: native support for Prometheus exponential histograms, which removed the need for an intermediate translation layer within the Collector.

The overall cost of the pipeline was reduced by roughly an order of magnitude compared to the previous vendor-based architecture. The centralised aggregation tier also created an operational benefit worth noting — it became a general-purpose transformation layer. Operators can now drop problematic metrics caused by bad instrumentation changes without touching application code, or temporarily dual-emit raw metrics for debugging.

What can other teams take from Airbnb’s migration pattern?

The dual-write pattern is the most directly replicable element. If your organisation has a shared instrumentation library — or can create one — you can use the same approach to run legacy and new pipelines in parallel. It works at any scale, not just Airbnb’s.

The zero injection technique addresses a problem that any team migrating from a delta-based system to Prometheus will encounter with low-frequency counters. Detailed public write-ups of this fix appear to be rare.

Airbnb is not alone in making this move. Shopify rebuilt their observability stack onto Prometheus and Grafana using dual-write. Flipkart solved a related aggregation problem at 80 million time-series using hierarchical Prometheus federation. The StatsD exit, the vendor cost reckoning, and the move toward a Prometheus-anchored open-source stack are a pattern playing out across large engineering organisations.

OTLP adoption delivers backend portability — by decoupling collection from storage, the backend becomes replaceable without re-instrumenting services. That is a strategic gain that compounds over time.

If you are evaluating whether this kind of migration makes sense for your team, the enterprise OpenTelemetry migration playbook walks through the full decision framework — from instrumentation audit through backend selection.

FAQ

What is delta temporality in OpenTelemetry?

Delta temporality is a metrics reporting mode where each export contains only the change since the last export, rather than the cumulative total. Configure it with AggregationTemporalitySelector.deltaPreferred() for services experiencing memory pressure from high-cardinality metrics.

How does the dual-write pattern work in an OpenTelemetry migration?

Instrumentation libraries are updated to simultaneously emit metrics to both the legacy pipeline and the new pipeline. Both process the same metrics in parallel, allowing teams to validate parity before cutting over.

What is zero injection and when do you need it?

Zero injection is a technique where the aggregation layer emits a synthetic zero the first time a counter is flushed, anchoring the cumulative baseline for Prometheus. You need it when migrating from delta-based systems to Prometheus with low-frequency, high-dimensionality counters.

Why did Airbnb choose vmagent over the OpenTelemetry Collector for aggregation?

The OpenTelemetry Collector lacked native metric aggregation support. vmagent provided built-in streaming aggregation, horizontal sharding, and a small codebase that made internal customisation feasible.

What is the difference between StatsD and OTLP for metrics?

StatsD uses UDP datagrams — fire-and-forget with no delivery guarantee. OTLP uses TCP with proper flow control for reliable delivery, and natively supports Prometheus data types like exponential histograms.

How does Airbnb’s vmagent topology handle horizontal scaling?

Stateless router pods hash metrics by all labels except those targeted for aggregation, then forward to stateful aggregator pods using Kubernetes StatefulSet stable network identities for consistent sharding.

Is the dual-write migration pattern specific to Airbnb?

No. Shopify used the same pattern in their observability migration. Flipkart tackled a related problem using hierarchical Prometheus federation. The approach is replicable for any organisation with a shared instrumentation library.

What is PromQL rate() and why does it cause counter undercounting?

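PromQL’s rate() derives deltas by comparing consecutive samples of a cumulative counter. A new series first appears already holding its first increment, so there is no earlier sample to compare against. If the pod restarts before the counter increments again, that increment is never counted. This systematically undercounts low-frequency, high-dimensionality metrics.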

How much did Airbnb’s migration reduce infrastructure costs?

Overall cost was reduced by roughly an order of magnitude compared to the previous vendor-based architecture. The 10x CPU figure is a separate measurement: the share of JVM CPU time spent on metrics processing dropped from 10% to under 1%.

Did Airbnb use Grafana Mimir as part of the migration?

Grafana Mimir is the storage backend, not part of the migration mechanism. The migration switched from StatsD/Veneur to OTLP/OpenTelemetry Collector/vmagent. Mimir is where aggregated metrics land.