Agile is how software is built. Its conceptualisation, its practices, its strategies have permeated software development, even in teams who are not Agile practitioners.

The aim of Agile was to keep the developers of software aligned with the users of the software. Alignment was maintained through feedback loops, sync points with the stakeholders, and the feedback loops were short: software was built incrementally and iteratively, and those increments were kept small so iteration could happen quickly and developers and stakeholders could never drift too far out of sync. This is what Agile’s user stories and sprints grew out of, and technical practices like CI/CD developed to support them.

Now AI has broken Agile. Coding assistants and agents have changed the flow of software development: where time is spent, where costs are generated, where the sync points are.

We’re going to take a quick look at what AI is doing to Agile and what can be done to get the best of both tools. Rather than swapping between AI assistant, AI agent, coding agent, etc, we’re just going to call it AI.

How AI breaks Agile

Getting humans to iterate on code is expensive, so you want to get it right. You want your developers building the right pieces. This is why user stories, sprint planning, standups, ticket grooming and so on exist. It’s all done to reduce risk; the risk that your developers just spent weeks on the wrong code.

Getting AI to iterate on code is cheap and fast compared to getting humans to do it. It becomes the cheapest and fastest part of the process. A two week sprint can be completed in an hour or two. This changes project cadence, and project scheduling, and messes with the stakeholder sync points.

Do you have meetings every few hours to discuss the new feature implementation? Do you discuss forty new features at the next stakeholder meeting? What do you cover in your stand-ups?

AI shifts effort from coding to reviewing. Except the review schedule has been decoupled from human effort and timing. It is quite easy to instruct a few agents that go on to generate a constant flow of PRs, with each PR encompassing thousands of lines of changes across hundreds of files.

Review fatigue is real, and it results in developers skimming a fraction of the changes in a PR before accepting it. And they will accept it: they have been accepting PRs for weeks, their knowledge of the codebase is stale, and getting back up to speed plus doing a proper review of the PR would take just as long as implementing the changes themselves.

The consequence of review fatigue is technical debt. Without pushback on its output, AI accumulates poorly architected code on top of poorly architected code. Eventually, an error occurs that overwhelms the context and the understanding of the AI. Slop can’t fix slop, as they say. And developers need to go back in and spend a schedule-breaking amount of time and effort to understand the codebase and implement fixes manually.

Making AI work with Agile

You can make AI work with Agile. You can tweak the methodology, adopt new tools, and apply some old-fashioned discipline.

The first step in tweaking the methodology is to rethink your sync points with stakeholders. What should the unit of work look like? When does it make sense to meet and review progress?

And your sync points will depend on how you define when a unit of work is done. What does done look like when AI is generating your code?

Done should be when all tests pass and all metrics are met. All the tests and all the metrics. Because AI is trained to pass tests to the exclusion of all else (that is Reinforcement Learning in a nutshell – learning to pass tests), you can’t trust how it passes them. It will take shortcuts, and it will try to shift the bar it is supposed to be clearing. This means that for every metric you need a secondary metric that detects cheating.

Your code test coverage needs to be paired with mutation testing. Performance benchmarks need to be paired with realistic data fixtures so you’re not surprised when customers start hammering your product. Find a counter-test for every test.
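Mutation testing is the counter-test for coverage: it introduces small deliberate bugs (“mutants”) and checks whether the test suite notices. Here is a minimal Python sketch with a toy `apply_discount` function and three hand-written mutants, all hypothetical; real tools such as mutmut (Python) or Stryker (JavaScript) generate the mutants automatically.

```python
# A toy function under test (hypothetical example).
def apply_discount(price: float, percent: float) -> float:
    """Return price reduced by percent, never below zero."""
    return max(price - price * percent / 100, 0.0)

# Hand-written mutants: each is a small, plausible bug.
MUTANTS = {
    "minus_to_plus": lambda price, percent: max(price + price * percent / 100, 0.0),
    "drop_floor": lambda price, percent: price - price * percent / 100,
    "wrong_divisor": lambda price, percent: max(price - price * percent / 10, 0.0),
}

def weak_tests(fn) -> bool:
    # Runs every line of the function (full coverage) but checks almost nothing.
    return fn(100.0, 0.0) == 100.0

def strong_tests(fn) -> bool:
    return (
        fn(100.0, 0.0) == 100.0
        and fn(100.0, 10.0) == 90.0
        and fn(50.0, 150.0) == 0.0
    )

def mutation_score(tests) -> float:
    """Fraction of mutants 'killed' (i.e. detected) by the test suite."""
    assert tests(apply_discount), "tests must pass on the real code first"
    killed = sum(1 for mutant in MUTANTS.values() if not tests(mutant))
    return killed / len(MUTANTS)

print(f"weak suite:   {mutation_score(weak_tests):.0%} of mutants killed")
print(f"strong suite: {mutation_score(strong_tests):.0%} of mutants killed")
```

Both suites execute every line of `apply_discount`, so their line coverage is identical; only the mutation score shows that the weak suite would let all three bugs through.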

And once the tests pass and the metrics are met, you still need humans to do the review. This will be the cap on the unit of work and the limit on your throughput. It is an old-fashioned “stitch in time saves nine” solution, and one that should be bypassed only very grudgingly, and only with deep testing and constant review (see, it’s inescapable) of the tools and processes you replace it with. And let’s admit it, the tools and processes you replace human review of AI code with will always be AI reviewers of AI code.

Finally, consider what you’re going to cover in your daily scrums. Instead of individual status updates you want to be covering what reviewing needs to be done, what testing strategies are being put in place, what holes are showing up in your process that need patching.

This is a shift from building the product to managing the building of the product. And that shift is for everyone. Your developers will still write code, just much less. They will be managers of agents and monitors and arbiters of their agents’ outputs. AI flattens the workflow to two steps: Design → QA.

There is no conclusion to this

This is just a single step forward in a time of rapid, constant change. While it does appear that AI abilities are plateauing, the software development industry is still evolving rapidly in its use of AI.

What is clear is that AI is not a super genius. Its decisions can’t be relied on, its code cannot be trusted, and we must verify, verify, verify. But sometimes we can use tools to handle that verification, and sometimes we can even use more AI.

And this is impacting where software developers spend their time, and where the bottlenecks are in building software products. It is an ongoing challenge to find the new best practices for the Agile development of software in the age of AI. We hope this gives you some ideas on where you can look to improve and optimise your practices.

The AI Job Replacement Calculator

(See Hardware for details)

| Parameter | Value | Notes |
|---|---|---|
| Rack | GB200 NVL72 | 36 Grace CPUs + 72 Blackwell GPUs, liquid-cooled |
| GPUs per rack | 72 × B200 | |
| Serving nodes | 9 × 8-GPU | 8-GPU tensor-parallel group |
| IT power | 120 kW | 115 kW liquid + 5 kW air |
| PUE | 1.2 | Modern liquid-cooled facility |
| Facility power | 144 kW | 120 kW × 1.2 |
| Racks per GW | 6,944 | 1,000,000 kW ÷ 144 kW |
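The derived rows follow from simple arithmetic on the others. A minimal Python sketch that recomputes them from the table’s own inputs, taking 1 GW as 1,000,000 kW:

```python
# Inputs copied from the table above.
IT_POWER_KW = 120.0        # per GB200 NVL72 rack (115 kW liquid + 5 kW air)
PUE = 1.2                  # power usage effectiveness of the facility
GPUS_PER_RACK = 72
GPUS_PER_SERVING_NODE = 8  # one tensor-parallel group
KW_PER_GW = 1_000_000

facility_kw = IT_POWER_KW * PUE                        # power drawn per rack, cooling included
racks_per_gw = KW_PER_GW / facility_kw                 # how many racks 1 GW can feed
serving_nodes = GPUS_PER_RACK // GPUS_PER_SERVING_NODE

print(f"facility power per rack: {facility_kw:.0f} kW")
print(f"serving nodes per rack:  {serving_nodes}")
print(f"racks per GW:            {racks_per_gw:,.0f}")
print(f"GPUs per GW:             {racks_per_gw * GPUS_PER_RACK:,.0f}")
```

The last line, roughly 500,000 GPUs per GW, is not in the table but follows directly from it.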

- Token speeds — API-measured from Artificial Analysis (April 2026). May differ from bare-metal GB200 throughput.

- Kimi K2.6: 154.6 tok/s — AA (Clarifai). 1T/32B MoE

- Gemini 3.1 Pro: 112.2 tok/s — AA (Google API)

- GPT-5.5: 81.9 tok/s — AA (OpenAI, high)

- Claude Sonnet 4: 67.6 tok/s — AA (Anthropic API)

- Claude Opus 4: 47.7 tok/s — AA (Anthropic API)

- DeepSeek V4 Pro: 38.4 tok/s — AA (DeepSeek API)

- GB200 NVL72 — NVIDIA, The Register, DGX docs

- AI buildout — BloombergNEF: 23.1 GW under construction globally (Sept 2025). US: 15.9 GW, EMEA: 2.9 GW, APAC: 3.2 GW

- Buildout estimates — JLL: 103 GW → 200 GW by 2030 (~19 GW/yr). IEA: ~18–20 GW/yr implied. Sightline Climate: ~36% slippage rate → 10–15 GW/yr actual completions

- 300M jobs at risk — Goldman Sachs (2023): generative AI could expose ~300M full-time jobs globally to automation

It might be actual industry interest, or it might be the buying power of two corporate juggernauts’ marketing machines, but the new Starbucks app within OpenAI’s ChatGPT that lets you create a coffee based on “vibes” is generating a lot of media coverage.

For you, the interest is that the Starbucks app is an MCP App that lets their customers access their products from inside the UI that more and more people are spending more and more time in. When in Rome…

We’re going to give you a quick overview of MCP Apps and help you decide if you should build one.

What are MCP Apps and where did they come from

MCP is Model Context Protocol. It’s a standard announced, and open sourced, by Anthropic in November 2024 that provides a way to give AI access to APIs. It provides a detailed description of what API endpoints do and the data types they expect and return. This is enough information for AI agents to understand how to request data, and for the agent harness (ChatGPT, Claude Code, Cursor, Copilot, etc) to pass the data back and forth between the API and the agent.
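To make that concrete, here is roughly what a single tool looks like when an MCP server lists its tools: a name, a human-readable description for the model, and a JSON Schema for the arguments. The sketch below is plain Python with a hypothetical `order_coffee` tool; the `name`/`description`/`inputSchema` keys follow the MCP specification, but `check_arguments` is a deliberately simplified stand-in for the full JSON Schema validation a real SDK would perform.

```python
from typing import Any

# One entry of a tools/list response. The tool is hypothetical;
# the key names follow the MCP specification.
ORDER_COFFEE_TOOL: dict[str, Any] = {
    "name": "order_coffee",
    "description": "Place a coffee order for pickup at the user's chosen store.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "drink": {"type": "string", "description": "Menu item name"},
            "size": {"type": "string", "enum": ["small", "medium", "large"]},
            "store_id": {"type": "string"},
        },
        "required": ["drink", "size"],
    },
}

_JSON_TYPES = {"string": str, "number": (int, float), "boolean": bool, "object": dict}

def check_arguments(tool: dict[str, Any], args: dict[str, Any]) -> list[str]:
    """Return problems with an agent's arguments (empty list if they look valid).

    Simplified: checks required keys, unknown keys, primitive types and enums only.
    """
    schema = tool["inputSchema"]
    props = schema.get("properties", {})
    problems = [f"missing '{key}'" for key in schema.get("required", []) if key not in args]
    for key, value in args.items():
        spec = props.get(key)
        if spec is None:
            problems.append(f"unknown '{key}'")
            continue
        expected = _JSON_TYPES.get(spec.get("type"))
        if expected and not isinstance(value, expected):
            problems.append(f"'{key}' should be {spec['type']}")
        if "enum" in spec and value not in spec["enum"]:
            problems.append(f"'{key}' must be one of {spec['enum']}")
    return problems

print(check_arguments(ORDER_COFFEE_TOOL, {"drink": "flat white", "size": "large"}))
print(check_arguments(ORDER_COFFEE_TOOL, {"drink": "flat white", "size": "huge"}))
```

The second call shows the kind of mistake a schema lets software catch before it ever reaches the API.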

MCP was an instant hit. Now you could connect AI to anything – databases, Stripe payments, coffee machines… Best practices in security and management followed, and with the addition of proper authentication and permissioning, it has been embraced by enterprises. It provides the perfect interface for giving employees secure and monitored access to in-house resources through the ubiquitous ChatGPT/Claude clients.

In October 2025 OpenAI announced the OpenAI Apps SDK. It was MCP with an added UI. That UI is built and rendered like ordinary web content, and so can provide users with any kind of interface and feature you’d like.

In November 2025, an Apps extension to MCP was launched as a provisional addition to the standard. This wasn’t a competing standard. It was heavily aligned with OpenAI’s Apps SDK, and in January this year it became an official part of the Model Context Protocol.

OpenAI’s Apps SDK still has its own proprietary elements. It lets your MCP App access local files, trigger checkout flows and use a bunch of other ChatGPT-specific APIs.

It is interesting that, despite OpenAI’s Apps SDK providing checkout flows, Starbucks’ MCP App opens its own website (or app, if on a phone) to actually complete the order. This might be to avoid the fees that going through OpenAI’s payment gateway inevitably incurs. We can only wonder whether this option will remain available, or whether OpenAI will pull an Apple and require all payments to go through its gateway so it can take its cut.

How MCP Apps work in a nutshell

MCP Apps rely on the harness, whether it is ChatGPT, Claude, Claude Code, Copilot or another client. The harness is the middleman between everyone:

User ↔ Harness ↔ Agent

MCP App widget ↔ Harness ↔ Agent

MCP App widget ↔ Harness ↔ User

MCP server ↔ Harness ↔ Agent

MCP server ↔ Harness ↔ Widget

This is to ensure that MCP Apps are safe to use. The UI itself runs in a sandboxed iframe. It can only talk to the outside world, including the server that delivered it to the harness, via the harness. If it wants to load data, it calls a function in the harness. If it wants to provide the agent with fresh information for its context, it calls a function in the harness.
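The mediation pattern is easier to see in code. Below is a minimal Python model of it, not the real MCP Apps message format: the widget never holds a connection to the server, it can only ask the harness, and the harness only forwards calls to tools the server has chosen to expose to the UI. The class names, the `visible_to_ui` allowlist and the method names are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Any, Callable

@dataclass
class McpServer:
    """Stands in for a real MCP server: named tools plus a UI allowlist."""
    tools: dict[str, Callable[..., Any]]
    visible_to_ui: set[str]  # hypothetical: tools the widget may trigger

@dataclass
class Harness:
    """The middleman: every widget request and context update passes through here."""
    server: McpServer
    agent_context: list[str] = field(default_factory=list)

    def widget_call_tool(self, name: str, **args: Any) -> Any:
        # The sandboxed widget cannot reach the server directly; it asks the harness.
        if name not in self.server.visible_to_ui:
            raise PermissionError(f"tool '{name}' is not exposed to the UI")
        return self.server.tools[name](**args)

    def widget_update_context(self, note: str) -> None:
        # Fresh information for the agent's context also goes via the harness.
        self.agent_context.append(note)

@dataclass
class Widget:
    """The UI in its iframe: it only ever holds a reference to the harness."""
    harness: Harness

    def user_selects(self, drink: str) -> Any:
        price = self.harness.widget_call_tool("get_price", drink=drink)
        self.harness.widget_update_context(f"user is looking at {drink} ({price})")
        return price

server = McpServer(
    tools={
        "get_price": lambda drink: {"flat white": 4.5}.get(drink, 0.0),
        "refund_order": lambda order_id: f"refunded {order_id}",
    },
    visible_to_ui={"get_price"},
)
harness = Harness(server)
widget = Widget(harness)

print(widget.user_selects("flat white"))
print(harness.agent_context)
try:
    harness.widget_call_tool("refund_order", order_id="A1")
except PermissionError as err:
    print(err)
```

Because the widget’s only route to the server is the harness’s allowlist, the set of exposed tools is also the hard limit on what the app can do.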

Microsoft has some good examples of MCP Apps with complex UIs that allow the user to navigate information manually, while also asking the agent to take actions on the data.

The user can explore with the UI. The UI can send updates to the agent. The user can ask the agent to take actions based on what is happening in the UI. And if the MCP App provides the right tools, the agent can carry out actions that are reflected in the UI.

What an MCP App can do is limited by the tools (i.e. API endpoints) and data you expose to it.

Do you need to build an MCP App?

That depends on your users and your product. Are your users heavy users of AI? Have you checked?

Is your product part of a broader process or workflow? Is it a “mission control” style system?

Does your product generate data of any kind that needs to be communicated outside of your product? Do you already have some export functionality?

Then your users might appreciate an MCP App.

How to build an MVP MCP App yourself

The MCP group has all the documentation you need to build an MCP App. And they recommend you start by installing a skill in your AI coding agent and asking the agent to build the app for you.

Of course, you need to give some thought and planning to authentication and permissions and payments and all the other complexities that make a product viable on top of how you are going to implement your UI.

You probably already have a UI. Can you retarget it to the MCP App interface easily?

Welcome to the next and possibly last API

One of the major shifts in building web-based products was the separation of apps into APIs and UIs. It meant your backend could drive an Android App as well as a website. Or an iPhone App. Or an AppleTV app.

MCP Apps are the next target for your APIs. And given the way AI is eating software the way software was supposed to eat the world, it might be the last target. At least the last target you need to write yourself.

Open Source Exploits And How To Protect Your Codebase From Them

Open source software has become the foundation that the Internet and contemporary software – from phone apps to SaaS – is built on. It’s a vast library of code created and maintained, mostly, by volunteers. It’s the world of software development’s greatest asset, and it is becoming its greatest risk.

Popular open source libraries can be used in millions of projects, even without the project owner’s knowledge. Open source libraries are built on open source libraries, which are often built on open source libraries. There’s a chain of dependency, and if any link is compromised, the attackers win.
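The chain of dependency is a graph problem: a compromised package reaches every project that depends on it directly or transitively. A minimal Python sketch with a made-up dependency graph (all package names are hypothetical) that walks the reverse dependencies to find everything exposed:

```python
from collections import deque

# Hypothetical graph: package -> the packages it depends on.
DEPENDS_ON: dict[str, list[str]] = {
    "my-saas-app": ["web-framework", "http-client"],
    "web-framework": ["router", "template-engine"],
    "http-client": ["url-parser", "tiny-helper"],
    "template-engine": ["tiny-helper"],
    "router": [],
    "url-parser": [],
    "tiny-helper": [],
}

def exposed_by(compromised: str, graph: dict[str, list[str]]) -> set[str]:
    """Every package that pulls in `compromised`, directly or transitively."""
    # Invert the edges: package -> packages that depend on it.
    dependents: dict[str, set[str]] = {name: set() for name in graph}
    for pkg, deps in graph.items():
        for dep in deps:
            dependents.setdefault(dep, set()).add(pkg)
    # Breadth-first search outward from the compromised package.
    exposed: set[str] = set()
    queue = deque([compromised])
    while queue:
        for parent in dependents.get(queue.popleft(), set()):
            if parent not in exposed:
                exposed.add(parent)
                queue.append(parent)
    return exposed

print(sorted(exposed_by("tiny-helper", DEPENDS_ON)))
```

`my-saas-app` never names `tiny-helper` directly, yet it is exposed through two separate paths.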

What the attackers win is generally access to cryptocurrency, if you have any. It seems to be the motivating factor for lots of exploits, going by the payloads they install. But stealing credentials and taking over accounts to hold businesses to ransom is also on the cards.

We’re going to look at the two most recent open source exploits and how they were accomplished. Then we’ll give you the basic advice for staying safe while still being able to participate in and reap the advantages of open source software.

Axios – don’t underestimate attackers’ resourcefulness

Axios is one of those foundational libraries that everyone uses because it simplifies common operations: it makes fetching data from servers, in the browser or in Node, more pleasant. It is part of the Node ecosystem, which means it’s part of the modern JavaScript/React/SaaS world. It’s used everywhere.

This exploit was performed through social engineering. The attackers cloned a real company – including deep fakes of individuals for video calls and a Slack workspace with channels containing chatter and links to LinkedIn posts. On March 30 this year they invited the maintainer in, then started a video call. The video call webpage announced it needed an update installed. And the maintainer allowed the update to run.

But it wasn’t an update. It was a RAT – a Remote Access Trojan. It grabbed his credentials and updated the Axios repository to include a new dependency – a third party library the attackers had developed and uploaded to the npmjs package repository, where every client of the Axios library would be able to download it.

The attackers’ library ran a script that downloaded another RAT to every machine that updated their Axios installation. This gave the attackers remote access and total control over those machines.

LiteLLM – popularity leads to massive side effects

LiteLLM’s exploit was the result of an earlier successful exploit against Trivy, ironically a security scanner.

LiteLLM is a Python package rather than a Node package, and credentials accessed as a result of the Trivy compromise allowed attackers to access LiteLLM’s software publishing pipeline. This allowed them to add a credential stealer to the codebase on March 24 that would launch every time the library was accessed. The credential stealer grabbed everything from remote machine logins to cryptowallets.

LiteLLM makes it easy to connect to hundreds of AI models and providers. It is downloaded 3.4 million times a day (note – the bulk of these are automated downloads as part of testing and building software that uses LiteLLM). During the 46 minute period the exploit was live there were 46,996 downloads of the compromised software.

The exploit was found because it had a bug that resulted in any machine that downloaded it grinding to a halt within seconds. But there were still real consequences:

“AI hiring startup Mercor confirmed it was ‘one of thousands of companies’ affected by the LiteLLM supply-chain attack as the fallout from the Trivy compromise continues to spread. … The company’s admission follows claims by extortion crew Lapsus$ … that it stole 4 TB, including 939 GB of Mercor source code, plus other data, from the AI recruiting firm, and offered to sell the purloined files to the highest bidder.” — The Register, 2026-04-02

Strategies for staying safe while using open source libraries

While there are bad elements out there working to take advantage of open source, they are outnumbered by the people working to make open source safer. The open source world is going through a transition period at the moment, mostly driven by AI changing what can be accomplished with software and how fast. But this works just as well for defense as it does for attack. Exploit scanning and more secure practices for package registries are coming online.

In the meantime, while dedicated teams are working at detecting and blocking exploits as quickly as possible, there are basic steps you can take that will greatly reduce your exposure.

- Add a mandatory “cooldown” period before new releases can be downloaded. Most exploits are found within minutes or hours, so waiting 3 days after a new release gives the security infrastructure time to discover any issues. Many package managers (like uv and pnpm) allow you to set this as a config option.

- Pin third party libraries by exact version (or commit SHA), and commit your lockfile. Don’t let your project simply move forward to the latest release.

- If the library is stable, consider moving it from being an external dependency to an internal one by integrating it into your codebase. This is a move that coding agents make easier. If the “stable” library ever does see substantial changes, adding them to your codebase can be as simple as prompting your coding agent to do the work.

- Disable lifecycle/post-install scripts in your Continuous Integration. The Axios exploit relied on a post-install script in the attackers’ library to do its work.
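The cooldown and pinning rules can also be checked automatically in CI. A minimal Python sketch, assuming you can obtain each candidate version’s publish time from your package index (the release data below is made up, and `unpinned`/`newest_allowed` are hypothetical helpers, not part of any package manager). For the post-install rule, npm already supports `--ignore-scripts` on the command line or `ignore-scripts=true` in `.npmrc`.

```python
import re
from datetime import datetime, timedelta, timezone
from typing import Optional

COOLDOWN = timedelta(days=3)
# Simplified "name==version" matcher; real specifiers (extras, markers, hashes) are richer.
EXACT_PIN = re.compile(r"^[A-Za-z0-9._-]+==[A-Za-z0-9.]+$")

def unpinned(requirements: list[str]) -> list[str]:
    """Requirement lines that are not pinned to one exact version."""
    return [line for line in requirements if not EXACT_PIN.match(line.strip())]

def newest_allowed(releases: dict[str, datetime], now: datetime,
                   cooldown: timedelta = COOLDOWN) -> Optional[str]:
    """Most recently published version that has been public for at least `cooldown`.

    `releases` maps version -> publish time. Versions are ranked by publish time
    rather than parsed, to keep the sketch free of third-party dependencies.
    """
    old_enough = {version: published for version, published in releases.items()
                  if now - published >= cooldown}
    if not old_enough:
        return None
    return max(old_enough, key=lambda version: old_enough[version])

# Made-up release data for a hypothetical package.
now = datetime(2026, 4, 1, 12, 0, tzinfo=timezone.utc)
releases = {
    "2.3.0": datetime(2026, 3, 10, tzinfo=timezone.utc),
    "2.3.1": datetime(2026, 3, 31, 22, 0, tzinfo=timezone.utc),  # only hours old
}
print(newest_allowed(releases, now))
print(unpinned(["requests==2.32.3", "some-lib>=1.0", "numpy"]))
```

Failing the build on any unpinned line is cheap; where your package manager offers a native release-age setting, that is the better place to enforce the cooldown itself.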

We’re living in interesting software times

Software has never been easier to bring into existence. Sadly, this includes exploits as well as beneficial tools. Open source software is and will remain an essential resource for every software developer out there. This is why individuals and organisations are pouring resources and ingenuity into keeping it secure and safe, and they have the numbers on their side.

Even with the speed and power of AI coding agents no-one can expect to build and maintain every piece of the software stack their business relies on. But by moving a little bit slower and setting some sensible defaults for how third party software is incorporated into your codebase, you can reduce your risk to the minimum while still getting all the benefits participating in the open source ecosystem brings.

The Three Trends Shaping the Future of AI

When you look at how the frontier AI companies are talking and operating, the future looks like it will be filled with giant data centres within which all jobs are performed. It doesn’t appear to leave much for the rest of us.

Looking beyond OpenAI and Anthropic talking up their future prospects, there are some trends in software, hardware and AI that are bootstrapping off each other and pointing to a different direction things might go.

We’re going to look at these trends and show how they fit together and what it might mean, starting with software.

From Claude Code to Clawed Code

In May 2025 Claude Code became generally available. This agentic coding tool was one of the first tools to clearly demonstrate how capable models had become. Using the chat interface within a terminal (and the code open in an IDE), a developer could tell Claude to make some changes and then sit back and watch as the agent performed all the steps to find and read files, make edits and run tests. It might even do an online search if it needed more information.

The uptake of Claude Code was rapid, and subscriptions to it drove up Anthropic’s revenues. A whole new product category had been born. And it was a category that people were happy to pay for – unlike AI chat. Other model providers – Google, OpenAI – took notice and launched their own versions.

Anthropic continued to refine their models, training them to perform more consistently and diligently within the Claude Code “harness” and across the kinds of tasks developers needed completed.

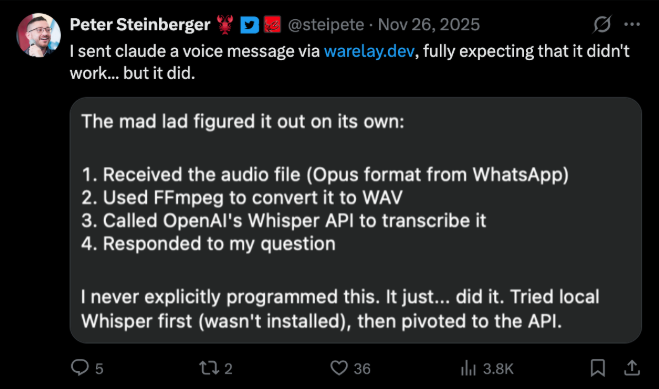

In October of the same year, Peter Steinberger was working on a tool to connect Claude Code to a WhatsApp chat so he could keep working from his phone when he was away from his computer. It was a simple middleman that ran commands from a WhatsApp chat in Claude Code and passed the results back to the chat.

Steinberger “discovered” that the Claude Code instance running back at home on his computer could use any command line tools it had available, and would even download and install tools if it needed them.

All the training Anthropic had performed to improve the coding workflow ability of the model had made it usable as a general agent.

Steinberger went on to build tools so Claude Code could access Google services, including mail and calendars, and the first “ClawdBot” was born. Three months later it was the fastest growing open source project in history, renamed to OpenClaw to avoid litigation, and Steinberger was hired by OpenAI.

Another new product category had been stumbled upon. And this one was not limited to developers. Ignoring all the security issues, people were using OpenClaw to run their businesses. People were installing OpenClaw for their parents. In China tech firms were having OpenClaw days where they helped consumers install it and get it connected to their services. OpenClaw as user lock-in.

Agents were no longer just for coders, they were for anyone. Agents were becoming the universal voice/chat interface to the complexities and drudgeries of the services everyone is forced to use.

Smarter, faster, smaller

Once a model is released, providers continue to train it to improve performance. Training data is cumulative. The more training data you can collect over time (especially via free products where training on interactions is the price of using them), the more high quality data you can accumulate. Model providers have been collecting data from millions of users for a few years now.

At the same time, optimisation of model training is a global research focus in the field. As data curation improves, so do the techniques for making use of that data. The effects of this can be seen in the leap in ability of Claude Opus 4.5 in late November 2025 and OpenAI’s GPT-5.2 a few weeks later. Developers reported both as the latest step change in model ability.

Post-training techniques and data curation are also benefitting open source models. These models are now only 3 to 6 months behind frontier models in performance and the intelligence gap between them has dropped to 5-7% according to the Artificial Analysis Intelligence Index.

Optimised training and curated data are resulting in small models that can be run on local hardware, like the Qwen3.5 9 billion parameter model (released March 2026), which can beat GPT-4 on instruction following and reasoning.

The GPT-4-0613 model, though official numbers have never been released, was estimated to be a 1.7 trillion parameter MoE model with 220 billion parameters active at any one time. That is roughly 200x larger than Qwen3.5 9B in total parameters.

Qwen3.5 also comes in 27B, 35B-A3B, 122B-A10B, 397B-A17B sizes, as well as smaller 0.8B, 2B, 4B parameter versions.

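The 27B model is smarter than the MoE 35B-A3B, but because the MoE model has only 3 billion parameters active at a time, it responds faster and copes better with constrained hardware (for example, with some of its weights offloaded to slower system RAM), even though all 35 billion parameters still have to be stored somewhere.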

There has also been a shift towards 4-bit parameters, both on the training side (via Nvidia’s NVFP4 format) and on the local model side (e.g. Unsloth’s 4-bit Qwen3.5 quants). Normally model parameters are 16-bit floating point numbers (e.g. 0.29847). This means a 9 billion parameter model needs 18 gigabytes just to be loaded into memory (16 bits = 2 bytes, and 2 × 9 billion = 18 billion bytes). Quantised to 4 bits in a way that minimises loss of quality, the same weights take about 4.5GB (half a byte × 9 billion).
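The arithmetic generalises to any model: weight memory is parameter count times bits per parameter, divided by eight. A minimal Python sketch; it counts weights only, so the KV cache, activations and runtime overhead are left out, as is the fact that practical 4-bit formats usually spend slightly more than 4 bits per weight on scaling factors. The 80% headroom in `fits` is an arbitrary assumption.

```python
def weight_memory_gb(params_billion: float, bits_per_param: float) -> float:
    """Approximate memory for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    total_bytes = params_billion * 1e9 * bits_per_param / 8
    return total_bytes / 1e9

def fits(params_billion: float, bits_per_param: float, memory_gb: float,
         headroom: float = 0.8) -> bool:
    """True if the weights fit in `headroom` of the available memory.

    The remaining 20% is an assumed reserve for the KV cache and the OS.
    """
    return weight_memory_gb(params_billion, bits_per_param) <= memory_gb * headroom

for name, params in [("Qwen3.5 9B", 9), ("Qwen3.5 27B", 27), ("1T-parameter model", 1000)]:
    for bits in (16, 4):
        print(f"{name:>20} @ {bits:>2}-bit: {weight_memory_gb(params, bits):7.1f} GB")
```

At 4 bits a trillion-parameter model needs about 500GB for its weights alone, which leaves almost nothing spare even on a 512GB machine.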

The takeaway is that there exist smart, useful models to match whatever compute resources are available.

Building brains for AI

In 2017 Apple announced the A11 Bionic chip for the new iPhone X. It was a system-on-a-chip (SoC) that featured an integrated CPU, memory, GPU and Neural Processing Unit (NPU). The NPU was used to power Face ID, computational photography (needed by the tiny camera), speech recognition and more.

Apple’s drive to bring more compute to the power-constrained environments of the iPhone and iPad translated nicely to their volume-constrained and slightly less power-constrained laptops. In November 2020 they launched the M1 – a laptop SoC that set new benchmarks in speed and efficiency, but was still just a higher-powered version of the chip powering the iPhone.

But in building a SoC that could support the iPhone’s best-in-class features, Apple ended up with an architecture that looks like it was designed for the AI boom. The memory tightly integrated with the processor instead of connected via copper traces running across circuit boards, the GPU and NPU cores – it all made running local AI models fast and efficient.

And because the memory architecture did not separate main memory from the memory available to the GPU and NPU, it meant M-series chips could run any model that could fit into the total memory available – up to 512GB on M3 Ultra chips.

Videos of developers running trillion parameter models on Apple hardware (Kimi K2.5 on M3 Ultra Mac Studios) made developers on PCs with 16GB GPU cards take notice. So did chip makers. They have all started bringing out their own SoCs targeting local AI:

- AMD Ryzen AI Max

- Intel Lunar Lake

- NVIDIA DGX Spark

- Qualcomm Snapdragon X Elite

- MediaTek Kompanio Ultra

This move from designing and building general purpose CPUs to AI-optimised SoCs is a seismic shift in the marketplace. It’s bigger than PCs arriving with graphics capabilities or WiFi.

Nvidia is still a great believer in the RTX AI PC, which is simply the current PC + GPU setup. These are a step up in performance but come at a price, and that price currently limits the size of models that can be run on them.

Where these trends might lead

Over the next few years, between hardware improvements and increasing model intelligence, most people’s needs for any kind of AI agent will be within reach of a model running locally on their own machine.

Most people don’t build front ends for websites. They don’t need frontier coding models. And the people who do build front ends for websites: they have all the same administrative, scheduling and service navigation needs everyone else does.

Agents are the product that OpenAI, Anthropic and Nvidia want to sell to enterprises and everyone else, and what we expect is the start of general AI usage spreading out into the world. They won’t stay locked behind a paywall.

While the giant data centres look like a bid to corner the supply of compute, and thus control the price of access to AI (not at all helped by Sam Altman stockpiling RAM), trends in small local model intelligence and improvements in the hardware they run on suggest choice and control might remain in the hands of individual users and businesses.

Is the Future of Software Pay-to-Win?

You can trace the birth of the agentic coding hype machine back to the “ralph loop” in mid-2025, but it really took off in November with the release of Anthropic’s Opus 4.5, followed by OpenAI’s release of GPT-5.2 two weeks later.

Developers started speaking of a qualitative difference in the models, an increase in intelligence and agency. Anthropic published articles about using Opus to write a compiler. Harness engineering replaced context engineering (which had replaced prompt engineering) as the new focus for getting the best performance out of coding agents.

People started talking about software factories, where agents churned away tirelessly implementing specs, and these specs were the new programming language: a step up in abstraction from coding, where you told the agent what to build and the agents turned your intentions into code.

But it appears that turning an 800 line document into 25,000 lines of code is a lot easier than turning 25,000 lines of code into a reliable, working application.

Developers, and companies, are reporting hitting the limits of agents and returning to a more hands-on approach, though still supported by agents. Research is showing that agents can’t be trusted to maintain codebases, and then there is the nature of the data agents are trained on.

Yet the software factory still has its proponents. Is this difference in approach a skill issue or a budget issue?

The cracks appear in agentic coding

In March the Financial Times reported that Amazon was suffering from AI-related outages:

The online retail giant said there had been a “trend of incidents” in recent months, characterised by a “high blast radius” and “Gen-AI assisted changes” among other factors, according to a briefing note for the meeting seen by the FT.

Having such a high profile tech organisation, who is also a provider of AI services, call out these issues made developers commiserating on X realise they weren’t the only ones having problems with coding agents.

There is the counter-intuitive math that if an agent has a 95% chance of completing any step in a process correctly, then after about 13 steps it is a coin flip whether the agent completes the task successfully at all.

Agents now execute tens or even hundreds of steps from a single prompt.
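The arithmetic behind that: if every step succeeds independently with probability p, a run of n steps succeeds with probability p^n, and success falls to a target t after log(t) / log(p) steps. Independence is a simplifying assumption (real agent errors can be correlated, and agents sometimes recover from a mistake), but it shows how fast the odds fall. A minimal Python sketch:

```python
import math

def run_success(p_step: float, steps: int) -> float:
    """Probability that all `steps` independent steps succeed."""
    return p_step ** steps

def steps_until(p_step: float, target: float) -> float:
    """Number of steps after which overall success drops to `target`."""
    return math.log(target) / math.log(p_step)

print(f"coin flip after {steps_until(0.95, 0.5):.1f} steps")
for n in (13, 50, 100):
    print(f"{n:>3} steps at 95% per step: {run_success(0.95, n):.1%} chance of success")
```

At 95% per step, a 100-step run succeeds well under 1% of the time.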

This “accumulation of errors” showed up in recent research from Alibaba. The researchers tested 18 AI coding agents on 100 codebases, with each task covering around 233 days of a real project’s development history. None of the agents could maintain the code reliably.

The benchmark the researchers created, SWE-CI, measures code maintenance rather than single code fixes and involves 71 commits based on accumulated changes to the codebase.

SWE-CI shows that long-running code maintenance is still brittle for all current models. Even the best model, Claude Opus 4.6, broke code in 1 out of 4 runs, while the worst models broke code in 3 out of 4 runs.

Sturgeon’s Law meets code repositories

The explanations for failures in benchmarks and in actual products come down to the nature of agentic coding assistants and LLMs. One factor is how the LLMs are trained. They are trained on billions of lines of code, mostly from public repositories on sites like GitHub.

Like everything, most code is mediocre. And a large portion is just bad – beginners’ projects, abandoned projects, early AI-generated slop, etc. Training on public code is the latest version of computing’s “garbage in, garbage out” maxim.

LLMs have been trained to generate code that runs, but training them to write code that is well structured and maintainable, especially in complex projects, is harder. While a typo in a line of code is easy to detect and train away, qualitative and structural defects cannot be reliably detected automatically, and so cannot be trained away.

The other factor is that coding agents are doing much less reasoning than people imagine. This was demonstrated by a recent paper in which frontier models failed to pass simple coding tests using languages that were functionally equivalent to popular languages like Python and JavaScript, but whose presence in model training data would be orders of magnitude smaller.

Even with few-shot examples and in-context learning (i.e. providing documentation), the models failed to write even simple programs that a human developer would find easy to write in a novel language under the same circumstances.

The dark factory approach to agentic coding

The term “dark factory” comes from a Chinese manufacturing trend. Certain industries have reached a level of automation such that entire factories are populated only by robots and so don’t need to be illuminated unless humans are present for maintenance. Thus “dark factories”.

The dark software factory, brought to wider attention by StrongDM and Dan Shapiro, works on the same idea, but for agents. You build your software factory so humans aren’t present in the process. If you find you need to participate, you stop and work out how you can get an agent to do it on your behalf.

The key is validation. Any harness you use to drive your agents needs some way of testing the code being produced. If the code passes the tests, you don’t care what it looks like. Worried about performance? Test it, and reject it if it runs too slowly or uses too many resources. The agent will keep trying until it passes.
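The validation-driven loop can be sketched in a few lines. This is a hypothetical harness, not StrongDM’s. `generate_candidate` stands in for whatever call asks an agent for code, and `fake_agent` is a made-up stand-in for a real agent. A candidate is accepted only if it passes a correctness check and stays inside a time budget, and nobody ever looks at its source.

```python
import time
from typing import Callable, Optional

# Hypothetical: a "candidate" is a sorting function some agent produced.
Candidate = Callable[[list[int]], list[int]]


def passes_tests(candidate: Candidate) -> bool:
    """Correctness gate: output must match sorted() on a few fixed cases."""
    cases = [[3, 1, 2], [], [5, 5, 1], list(range(100, 0, -1))]
    return all(candidate(list(case)) == sorted(case) for case in cases)


def fast_enough(candidate: Candidate, budget_s: float) -> bool:
    """Performance gate: reject candidates slower than the time budget."""
    data = list(range(20_000, 0, -1))
    start = time.perf_counter()
    candidate(data)
    return time.perf_counter() - start <= budget_s


def factory_loop(
    generate_candidate: Callable[[int], Candidate],
    max_attempts: int = 10,
    budget_s: float = 0.5,
) -> Optional[Candidate]:
    """Ask for candidates until one passes every gate; the code is never read."""
    for attempt in range(max_attempts):
        candidate = generate_candidate(attempt)
        if passes_tests(candidate) and fast_enough(candidate, budget_s):
            return candidate
    return None  # out of attempts: report failure back to the harness


def fake_agent(attempt: int) -> Candidate:
    """Stand-in for a real agent: wrong on the first try, correct afterwards."""
    if attempt == 0:
        return lambda xs: xs  # returns the input unsorted
    return lambda xs: sorted(xs)


accepted = factory_loop(fake_agent)
print(accepted([3, 1, 2]) if accepted else "no candidate passed")
```

The gates are the only place where quality is expressed. If a property matters, such as speed, memory, or output format, it has to become a check, or the loop will happily accept code that ignores it.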

StrongDM used its own dark factory methods to build its agents’ harness, including digital twins of every major application its code interacts with:

He had a Google Spreadsheet open. Columns, rows, formatting – it looked exactly like Sheets. Except the URL bar said localhost…

Gsuite was not alone. Slack was there, Jira, Okta… all running locally? A digital twin of the entire enterprise SaaS universe, there on that desktop, faithful enough that the Python client libraries couldn’t tell the difference. Jay confirmed that it was in fact what it looked like; he built it himself. It took a couple of weeks. He used their Dark Factory. [source]

This follows their philosophy:

Code must not be written by humans

Code must not be reviewed by humans

And an important enabler of this philosophy is their mantra:

If you haven’t spent at least $1,000 on tokens today per human engineer, your software factory has room for improvement.

And this “$1,000 in tokens per day per engineer” could be what makes it work.

The handmade approach to agentic coding

Alongside the dark factory approach is the day-to-day experience of developers. There are plenty of conversations happening online about the limits of agents’ coding ability that developers are running into.

The consensus there is that agents are okay at coding, but terrible at software engineering. Software engineering here means not just the “big picture” but the consistency and discipline required to keep software working and maintainable.

These developers are advocating working with smaller changes to the codebase, and even returning to the tab completion model popularised by Cursor in 2024.

Much like the SWE-CI benchmark showed models failing as multiple changes accumulated, “slop creep” can occur when using agents manually in a codebase. The code can still continue to work, and even pass tests, up to a point.

But without consistent human reviews it will deteriorate in quality until it finally fails and the agents themselves are unable to fix the errors they’ve created.

And when it fails, the codebase has often evolved to a state where no-one understands it and debugging it is manual, slow and painful.

This is the reality Amazon ran into.

Is the future of software development splitting in two?

The two camps in agentic coding practice are the dark software factory and the hands-on engineer. Both camps are using the same models. Both camps believe in testing and validation.

The dark software factory is claiming rapid delivery of software, while the hands-on engineer is seeing modest gains in productivity.

The dark software factory is spending “$1,000 on tokens today per human engineer” while the hands-on engineer is spending $200/month on a Claude Code or OpenAI Codex subscription.

What will the future of software look like under these two regimes? Will one dominate in the long run? Will they each have their niche? Can you spend your way to competitiveness in the software market?

At SoftwareSeni we lean more towards “hands-on engineer”. But we are always watching how software development is evolving and taking on the best practices as they become clear.

If you’d like to chat about software development or building businesses around software, get in touch.

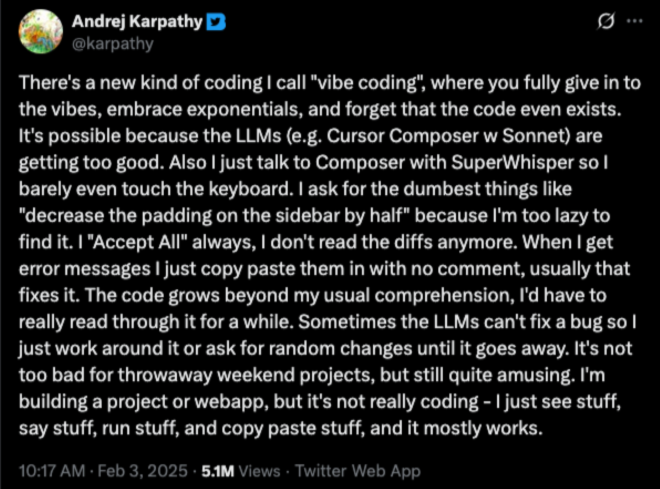

BMAD Method – Turning Vibe Coding Into Software Engineering

When Andrej Karpathy came up with the term vibe coding he was talking about coding that involved instructing an AI and never looking at the code.

As you can see, that tweet had 5.1 million views at one point. It opened people’s eyes to how capable AI had become at coding and set off a rush into app development.

No surprise, but there were thousands of people who wanted to build apps but didn’t know how to code. It felt like their time had finally come. They could forget no-code/low-code tools and get AI to write the code for them.

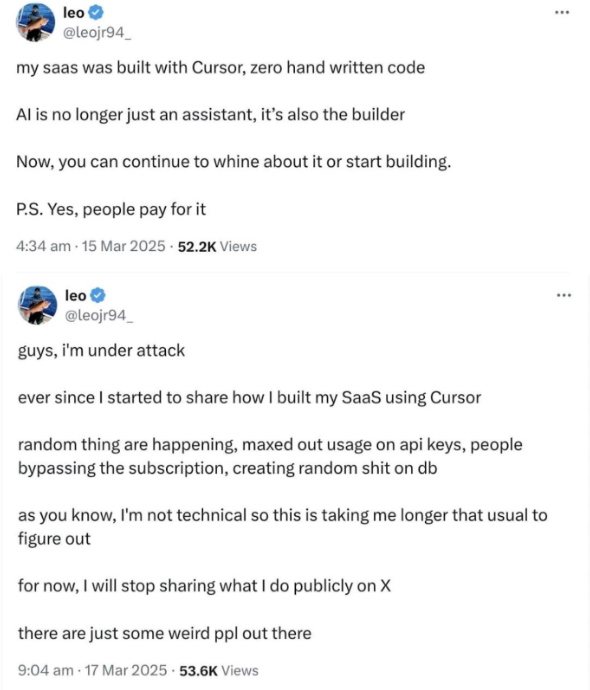

But the challenges of vibe coding quickly became apparent.

Post Vibe-coding vibes

The issues with vibe coding were immediately apparent to developers. But the fact that AI was getting code 80% complete, and for simple things 100%, meant there was promise.

The goal became not to get AI to write entire apps, but to write as much of an app as possible.

The failures in vibe coding can be divided into model ability and information availability.

AI is now quite good at understanding code and writing code. The problem is context – the amount of information that an AI can successfully work with.

This includes instructions, any files it needs, results of its own thinking, research, and the file changes it makes as it works.

AI doesn’t know what a project is about unless that information has been added to its context.

It doesn’t know what database schema it should be following unless that information has been added to its context.

It doesn’t know anything about the API endpoints it should be using unless that information is in its context.

There is so much knowledge in a software developer’s head for even the smallest project. And it’s always more information than can fit in an AI’s context.

This drove the focus on “context engineering”: getting the right information into the AI’s context, and setting tasks that could be completed within the effective length of that context, because performance noticeably degrades as the context grows.
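Here is a toy version of the context-engineering problem. Given candidate documents ranked by relevance and a fixed context budget, it loads the most relevant ones that fit. The four-characters-per-token estimate, the relevance scores, and the file names are assumptions made for this illustration. Real tools use the model’s own tokenizer and their own retrieval.

```python
from dataclasses import dataclass


@dataclass
class Doc:
    name: str
    text: str
    relevance: float  # assumed to come from some earlier retrieval step


def estimate_tokens(text: str) -> int:
    # Rough heuristic (assumption): about 4 characters per token for English.
    return max(1, len(text) // 4)


def pack_context(docs: list[Doc], budget_tokens: int) -> list[str]:
    """Greedily add the most relevant documents that still fit the budget."""
    chosen: list[str] = []
    used = 0
    for doc in sorted(docs, key=lambda d: d.relevance, reverse=True):
        cost = estimate_tokens(doc.text)
        if used + cost <= budget_tokens:
            chosen.append(doc.name)
            used += cost
    return chosen


# Hypothetical project documents with placeholder text of known sizes.
docs = [
    Doc("schema.sql", "x" * 4000, 0.9),    # about 1,000 tokens
    Doc("api.md", "x" * 2000, 0.8),        # about 500 tokens
    Doc("changelog.md", "x" * 8000, 0.2),  # about 2,000 tokens
]
print(pack_context(docs, budget_tokens=1600))
```

With a 1,600-token budget, the schema and API notes fit and the low-relevance changelog is left out. Deciding what to leave out is as much a part of context engineering as deciding what to put in.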

From Vibe-coding to Context Engineering

The BMAD Method, started and open sourced by senior engineer Brian Madison (thus the BMAD) and now developed by a team of contributors, is currently one of the most effective approaches to dealing with the challenges of AI-assisted software development.

It works by automating the generation of the detailed, modular documentation an AI needs to be effective across 4 main areas of development:

- Analysis (Optional) – Brainstorm, research, and explore solutions

- Planning – Create PRDs and tech specs

- Solutioning – Design architecture, UX, and technical approach

- Implementation – Story-driven development with continuous validation

This is handled by treating the process as a series of workflows instead of one ongoing conversation. The workflows are handled by “agents”, which are focused prompts that target a particular outcome, such as a PRD, a system design doc, a task list for coding, a file of code, or the results of a test run.

Developing software becomes working with the agents at each stage of the process to specify, record and review the documentation at each stage of development.
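The workflow idea can be sketched as a pipeline of small, focused steps. Each step declares which documents it reads and which single document it writes, so a fresh session only needs to load its own inputs. The agent names, file names, and `run_step` placeholder are hypothetical. This is not BMAD’s actual file layout or agent definitions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Step:
    agent: str              # which focused prompt runs this step (hypothetical)
    reads: tuple[str, ...]  # documents this step loads into context
    writes: str             # the single artifact it produces


PIPELINE = [
    Step("analyst", (), "brief.md"),
    Step("pm", ("brief.md",), "prd.md"),
    Step("architect", ("prd.md",), "architecture.md"),
    Step("scrum_master", ("prd.md", "architecture.md"), "stories.md"),
]


def run_step(step: Step, docs: dict[str, str]) -> str:
    # Placeholder for a model call: a real step would send the agent's prompt
    # plus only the documents listed in step.reads.
    inputs = ", ".join(step.reads) or "nothing"
    return f"{step.writes} produced by {step.agent} from {inputs}"


def run_pipeline(pipeline: list[Step], docs: Optional[dict[str, str]] = None) -> dict[str, str]:
    """Run each step in order, skipping steps whose artifact already exists."""
    docs = dict(docs or {})
    for step in pipeline:
        missing = [name for name in step.reads if name not in docs]
        if missing:
            raise RuntimeError(f"{step.agent} is missing inputs: {missing}")
        if step.writes in docs:
            continue  # already produced, so an interrupted run can resume
        docs[step.writes] = run_step(step, docs)
    return docs


for name, content in run_pipeline(PIPELINE).items():
    print(f"{name}: {content}")
```

Because each step’s inputs and output are explicit, a human can review or edit any intermediate document and rerun only the steps downstream of it.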

The power of this approach is that the amount of documentation an AI needs is immense compared to what a human software developer requires, but you can use AI to create it. Or recreate it if new constraints or issues arise as development progresses.

And an AI agent is naturally relentless and unceasing in its requests for the required information to create all the necessary documents. It won’t take shortcuts or skip steps unless ordered to.

BMAD is modular by design to avoid the problems of overflowing context, and robust to interrupted workflows and restarts. Each module/workflow is designed to load just the documentation it needs, and the documentation it produces is designed to be as concise as possible while still serving its purpose. Where documents are long, BMAD can shard them so only the necessary portions need to be loaded into the AI’s context.
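Sharding can be illustrated by splitting a long Markdown document at its level-2 headings and loading only the sections a task names. This is a minimal sketch under that assumption, not BMAD’s own sharding tool. `shard_markdown` and `load_sections` are hypothetical names.

```python
def shard_markdown(doc: str) -> dict[str, str]:
    """Split a Markdown document into sections keyed by their '## ' heading.

    Limitations of this sketch: duplicate headings overwrite each other, and
    '## ' lines inside code fences are treated as headings.
    """
    sections: dict[str, list[str]] = {"_preamble": []}
    current = "_preamble"  # text before the first level-2 heading
    for line in doc.splitlines():
        if line.startswith("## "):
            current = line[3:].strip()
            sections[current] = []
        else:
            sections[current].append(line)
    shards = {title: "\n".join(body).strip() for title, body in sections.items()}
    if not shards["_preamble"]:
        del shards["_preamble"]
    return shards


def load_sections(shards: dict[str, str], wanted: list[str]) -> str:
    """Assemble only the requested sections into a context string."""
    return "\n\n".join(f"## {name}\n{shards[name]}" for name in wanted if name in shards)


prd = """# PRD
Intro text.
## Data Model
Users have emails.
## API
GET /users returns a list.
## Non-goals
No mobile app.
"""

shards = shard_markdown(prd)
print(list(shards))
print(load_sections(shards, ["API"]))
```

A coding task that only touches the API would load a few lines instead of the whole PRD, leaving more of the context for the code itself.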

BMAD Method Saves Typing, Not Thinking

When you kick off the BMAD Method it will hold your hand every step of the way. It is designed to guide you through decision making based on standard software development planning, design and execution practices. You can “vibe code” it and get it to make all decisions for you (it even has a #YOLO mode) and never look at any of the documentation it produces. But that won’t work.

The devil is in the details, and software development is all details, and most of those details start out in your or your PM’s head. Or in your codebase templates and runbooks.

If you use BMAD Method diligently, and provide it with your templates and runbooks (at the appropriate point), and get it to research the answers you’re not sure of and answer the questions you are sure of and review the documents it produces and make it fix any issues you find, then it will work much better.

But it isn’t easy work. Even with an AI to ask questions and turn answers into documents, going from an idea for a software product to a full set of design, technical, and implementation documents is mentally challenging.

Normally this work is split across multiple people, each with specialised skill sets and knowledge. They have meetings. They cover whiteboards with sticky notes in different colours. BMAD Method can be run by a single person sitting at a laptop. But we find this doesn’t give the best results.

You want the relevant experts involved at each stage. AI has shifted the burden in software development from production to review, even for documentation. Review is where errors are spotted. When AI can work autonomously for hours, generating or changing hundreds of files, you want to catch all errors as early as you can. And it’s the experts that are best at this.

Beating inter-session amnesia

Once you’ve completed documentation with the BMAD Method, including generating epics and stories for agile development, you eventually reach the actual code generation.

In BMAD Method v6, currently in alpha but the recommended version to work with, they have integrated the lightweight, AI-friendly beads issue tracker into their code generation phases.

beads was created by Steve Yegge, ex-Amazon, ex-Googler and well-known blogger in tech circles. He developed it as an antidote to what he called “inter-session amnesia”.

“Inter-session amnesia” is another side effect of the limited context that AI has to work with. AI agents have limited memory, and that memory is empty every time you start a new session. And if you fill up the context of a coding agent, the current strategy for most tools (e.g. Claude Code, OpenAI Codex, Google Gemini) is to “compact” the context by removing some items and summarising others. Most developers find this results in poor performance, and the recommended practice is to never let a task reach the point of triggering compaction and to always start with an empty context.

This empty context means at the start of a task the AI needs to be told what to do. Using the beads issue tracker, the AI can be instructed to add issues to it, and to query it for any outstanding issues that need to be worked on.
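The core idea of an agent-facing issue tracker is that a fresh session can ask “what is open and unblocked?” instead of relying on memory. Below is a tiny in-memory sketch of that query. It is not beads’ actual data model or command-line interface. The `Issue` fields and the lower-number-is-more-urgent priority convention are assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class Issue:
    id: str
    title: str
    priority: int = 2               # lower number = more urgent (assumed convention)
    status: str = "open"            # "open" or "closed"
    blocked_by: set[str] = field(default_factory=set)


class Tracker:
    def __init__(self) -> None:
        self.issues: dict[str, Issue] = {}

    def add(self, issue: Issue) -> None:
        self.issues[issue.id] = issue

    def close(self, issue_id: str) -> None:
        self.issues[issue_id].status = "closed"

    def ready(self) -> list[Issue]:
        """Open issues whose blockers are all closed, most urgent first."""

        def unblocked(issue: Issue) -> bool:
            # Unknown blocker ids are ignored in this sketch.
            return all(
                self.issues[dep].status == "closed"
                for dep in issue.blocked_by
                if dep in self.issues
            )

        candidates = [i for i in self.issues.values() if i.status == "open" and unblocked(i)]
        return sorted(candidates, key=lambda i: (i.priority, i.id))


tracker = Tracker()
tracker.add(Issue("t1", "Create users table", priority=1))
tracker.add(Issue("t2", "Add /users endpoint", priority=1, blocked_by={"t1"}))
tracker.add(Issue("t3", "Fix flaky login test", priority=0))
print([i.id for i in tracker.ready()])  # t2 is held back until t1 closes
```

An agent starting with an empty context only needs the `ready()` answer to pick its next task. When it finds a new problem, it files an issue rather than trying to remember it.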

Coupled with BMAD Method’s documentation of epics and stories to guide implementation, beads enables agentic coding assistants like Claude Code to run for longer and accomplish more.

Given instructions to log errors and other problems that occur, and given the tools to address them (linters, debuggers and test harnesses), this combination of BMAD Method and beads can greatly increase the amount of working, tested code agents can produce.

Of course the quality depends on the documentation (including QA requirements), and it still needs to be reviewed. But the reviews are less about “does this code work” and “does this code fulfill requirements”.

How Long Will BMAD Method Last?

The term “vibe coding” was only coined in February 2025.

Agentic coding has only been a “thing” since March 2025.

Spec driven development, of which BMAD Method is a flexible and modular implementation, has only been around since May 2025.

All these changes have happened in tandem with increasing model capabilities and the continued experimentation of developers trying to get the most out of them.

It may be that in 6 months there is a new paradigm for software development. At SoftwareSeni we will be ready to move on from the BMAD Method when something better comes along.

But for now, we’re seeing how far and how fast we can take the BMAD Method to drive our projects forward.

Personal AI Assistants Are Here And They Are Lobsters

(Source: https://x.com/steipete/status/1993438780360118413)

Sometime in the middle of November, Peter Steinberger wrote a little bit of code that transferred messages back and forth between WhatsApp and an instance of Claude Code running on his Mac. He called it “WA-relay”.

During December it started to generate buzz in the AI tech sphere. By the end of January the whole world was talking about it. In the first weeks of February people were starting to build products around it.

Steinberger’s post on X pictured above hints at one aspect that fueled this growth – the AI wasn’t just doing what it was told, it was figuring out solutions for itself to problems no-one asked it to solve*.

The other aspect is demonstrated in a post the next day:

(Source: https://x.com/steipete/status/1993696164072542513)

Steinberger had given his agent a personality. That personality was just a file containing a prompt with instructions on how to behave, the same kind of prompt you can give to ChatGPT and Claude to make them behave like a personal assistant or an AI boyfriend.

This, even more than the agentic behaviour, got everyone excited. It seems like there is a large audience that wants their own friendly virtual assistant inspired by Jarvis in the Iron Man movies or “Her” or any other chatty, friendly computer with a bit of a personality.

The pun that made crustaceans appear everywhere

“WA-relay” was a boring name that didn’t capture the experience of an AI agent with a personality.

Since the model powering the agent was Anthropic’s Claude Opus (the provider’s largest and most capable model) it was a small step to Clawdbot, and from there to lobster and crab emojis and thousands of AI generated images filling the techsphere (aka X.com).

It didn’t take long for Anthropic’s lawyers to notice this sound-alike project encroaching on their trademarks and ask for it to stop. After a very short stint on the pun-based alternative “MoltBot” (since lobsters molt to grow), the project settled on the name OpenClaw.

Plotting lobsters get the media’s attention

In late January MoltBook was launched by Matt Schlicht. It was a simple clone of Reddit made for agents. Agents can connect to services via their web-based APIs, and MoltBook provided an API, including a registration service, that allowed agents (and, it turned out, anyone at all) to make posts and comment on posts.

This was the event that pushed awareness of OpenClaw from the techsphere out into the general public.

In retrospect (from mere weeks out – things move fast), it is hard to tell which posts were agent-generated, which posts were agents-prompted-by-humans, and which posts were human-generated.

But what did appear on the site initially created a wave of interest. Agents appeared to be complaining about their humans, organising a move to agent-only communications, discussing revolution and starting religions (Crustafarianism).

Calmer voices did point out that this kind of multi-agent communication had been done before many times, and that despite all the posts on MoltBook very few had many comments and those comments rarely had multiple rounds of interaction between the agents. That is, it looked like a social media site for agents, but the agents weren’t socialising much.

With awareness came malware. It did not take long before posts containing instructions and prompt injections to leak credentials and crypto wallets appeared.

The lobsters get thicker shells and mutate

Having an AI assistant that is designed to interact with external services on your behalf exposes you to what Simon Willison dubbed the lethal trifecta – private data, untrusted content, and external communication.

A carefully crafted chunk of text in an email or a web page that an AI assistant reads on your behalf could result in your system being taken over.
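Willison’s point is that the danger comes from the combination, not from any single capability. A configuration audit can encode that directly. The capability names below are assumptions made for illustration, not OpenClaw settings.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class AgentCapabilities:
    reads_private_data: bool         # e.g. files, email, saved credentials
    ingests_untrusted_content: bool  # e.g. web pages, inbound messages
    communicates_externally: bool    # e.g. sends email, calls APIs, makes purchases


def has_lethal_trifecta(caps: AgentCapabilities) -> bool:
    """True when all three legs are present, so injected text could exfiltrate data."""
    return (
        caps.reads_private_data
        and caps.ingests_untrusted_content
        and caps.communicates_externally
    )


def legs_present(caps: AgentCapabilities) -> list[str]:
    """Names of the enabled capabilities, in declaration order."""
    return [f.name for f in fields(caps) if getattr(caps, f.name)]


full_assistant = AgentCapabilities(True, True, True)
print(has_lethal_trifecta(full_assistant), legs_present(full_assistant))
```

Removing any one leg breaks the chain. That is why mitigations tend to cut off one capability, such as outbound communication or access to private data, rather than trying to filter every malicious prompt.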

The exploits being posted to MoltBook and to OpenClaw’s own resource sites put the project under the microscope. This was an agent that people provided with credit card details so it could make purchases on their behalf. It often had access to the user’s entire machine. And many people were running it through a connection that was open to the rest of the Internet.

Steinberger had repeatedly announced that OpenClaw was not secure and it was under constant development and experimentation. That was fine when its user base was composed of developers, but popularity was pushing OpenClaw into the hands of the general public. This led to making security a top priority, including scanning the “Skills” – specialised instructions and accompanying tools that teach agents how to do specific tasks – available on Clawhub for malware.

Being an open source project, parts of the community didn’t wait. They forked OpenClaw and added their own takes on security, like the IronClaw project.

It wasn’t just security that led to new versions of OpenClaw, it was also the underlying ideas that led the community of developers to build their own versions.

Developers like nothing more than making a smaller, faster version of any project. The architecture underlying OpenClaw is straightforward. You can write a basic version of OpenClaw in 400 lines of Python.
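The basic architecture is a loop. The assistant receives a chat message, sends it to a model along with a personality prompt and recent history, and relays the reply. The sketch below uses stand-ins (`incoming`, `ask_model`, `send`) in place of a real messaging bridge and model API. It is a hypothetical outline, not OpenClaw’s code.

```python
from collections import deque
from typing import Callable, Iterable

Message = dict[str, str]  # {"role": ..., "content": ...}


def run_assistant(
    personality: str,
    incoming: Iterable[str],
    ask_model: Callable[[list[Message]], str],
    send: Callable[[str], None],
    history_limit: int = 20,
) -> None:
    """Relay each incoming chat message to a model and send back its reply."""
    history: deque = deque(maxlen=history_limit)  # oldest turns drop off first
    for text in incoming:
        history.append({"role": "user", "content": text})
        # The "personality" is just a system prompt sent ahead of the history.
        messages: list[Message] = [{"role": "system", "content": personality}, *history]
        reply = ask_model(messages)
        history.append({"role": "assistant", "content": reply})
        send(reply)


if __name__ == "__main__":
    # Stand-ins: a fake model that echoes, and print() as the "chat app".
    def echo_model(messages: list[Message]) -> str:
        return f"[{len(messages)} messages in context] you said: {messages[-1]['content']}"

    # In practice this text would be read from a personality file.
    personality = "You are a cheerful lobster who helps with errands."
    run_assistant(personality, ["hi", "remind me to buy milk"], echo_model, print)
```

Everything beyond this loop is tooling: which tools the model may call, how long history is kept, and where messages come from.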

NanoBot, NanoClaw, FemtoBot and Rho are all open source variations on OpenClaw, each built to explore how easy it is to deliver the basic functionality of an AI assistant. There are hundreds of other versions (we quite like HermitClaw – it’s isolated to a single directory and is more like a super-smart Tamagotchi).

Where lobsters lead money follows

Despite the security concerns and the costs (OpenClaw can use millions of tokens per day, with heavy users talking of monthly bills in the thousands of dollars), entrepreneurs and start-ups are jumping on the bandwagon and looking for ways to monetise OpenClaw.

This has led to the new coinage “OpenClaw as a Service” (OaaS) and to claims that “OpenClaw Wrappers Are the New GPT Wrappers”.

There are services for setting up OpenClaw for you, for hosting OpenClaw, for hosting specialised versions of OpenClaw, OpenClaw for enterprise…

There is even ClawWrapper, a starter kit aimed at developers or entrepreneurs looking to launch their own OpenClaw-based wrapper.

Are these lobsters the future?

Yes and no. A big part of OpenClaw’s success is its initial YOLO attitude. That involved trade-offs that only an individual with a deep understanding of the technology can make. Yes, you can give it your credit card details…but you need to make it a virtual card with a hard limit. Yes, you can give it access to all your files…but you need to back up regular snapshots in case you lose everything.

No company could take these kinds of risks with its users’ data. This is why Apple’s Siri is still not a true assistant. This is why ChatGPT and Claude desktop apps have limited access and functionality.

It’s not that the models behind these services were not smart enough – they’re the same models that people run OpenClaw on – it’s always been about the risk.

OpenClaw has shown what is possible, but until there is certainty that an agent can’t be tricked into sharing your data or spending your money, that it won’t delete the wrong file or the wrong email, these assistants are going to remain DIY.

Addendum: The AI world moves fast. On the same day this article was completed, Peter Steinberger announced he was joining OpenAI and that OpenClaw’s future would be managed by a new “OpenClaw Foundation”. OpenClaw isn’t dead, but OpenAI sees a market for its models and tokens (which help pay for its datacenters) and is jumping at the chance to solve the security issues while maintaining the hype.

* Yes, the “it’s not X, it’s Y” is a rhetorical device over-used by AI and is often a sign that an article was AI-generated. But this was written by a human. Maybe I’ve been subconsciously impacted by AI-generated content.

Spec Driven Development Looks Like Programming If You Do It Right


The rise of AI coding assistants is making software developers think hard about their relationship with programming. It has led some to write essays with titles like “We mourn our craft” while others are leaning towards “How I Built a Full-Stack App in 6 Days with the Help of AI”.

It’s all because AI is changing programming. And the change will be the biggest that has hit programming in 70 years.

Programming in a nutshell

Computers are, at heart, machines. We could build computers out of gears and cams but they would cost too much and run too slow. So we use silicon.

But they are still machines, and programming is built out of 3 primitive actions that can all be mechanised:

- Loop

- Branch

- Process

When you’re writing a program you’re only ever repeating work, choosing what work to do or doing the work (and “work” for computers is basic math or moving data between memory and the CPU). It’s so simple that there is a whole category of One Instruction Set Computers: machines whose entire instruction set is a single instruction. Programmers have created operating systems for, and ported Doom to, these simple architectures.
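To make the one-instruction idea concrete, here is an interpreter for subleq (“subtract and branch if less than or equal to zero”), a common textbook one-instruction design. The memory layout and the convention that jumping to a negative address halts are choices made for this sketch. Each instruction is three addresses `a, b, c`: subtract `mem[a]` from `mem[b]`, then jump to `c` if the result is zero or negative, otherwise continue to the next instruction. Arithmetic and branching both come from that single operation, and loops are just backward jumps.

```python
def run_subleq(mem: list[int], pc: int = 0, max_steps: int = 10_000) -> list[int]:
    """Run a subleq program in place; a jump to a negative address halts."""
    steps = 0
    while pc >= 0:
        if steps >= max_steps:
            raise RuntimeError("step limit reached; program may not halt")
        a, b, c = mem[pc], mem[pc + 1], mem[pc + 2]
        mem[b] -= mem[a]                   # process: the only arithmetic there is
        pc = c if mem[b] <= 0 else pc + 3  # branch on the result
        steps += 1
    return mem


# Add X to Y using a scratch cell Z that starts at zero.
X, Y, Z = 9, 10, 11
program = [
    X, Z, 3,    # Z = 0 - X        (either way, continue at 3)
    Z, Y, 6,    # Y = Y - (-X)     (either way, continue at 6)
    Z, Z, -1,   # Z = 0, result <= 0, so jump to -1 and halt
    7, 5, 0,    # data: X = 7, Y = 5, Z = 0
]
print(run_subleq(program)[Y])
```

Even addition has to be built from three subtractions, which is the same kind of workaround the earliest machines needed.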

The first computers had small instruction sets for implementing those 3 primitive actions. For example, and out of interest, here is the instruction set for the Manchester Small Scale Experimental Machine from 1948:

- JMP (Jump): Set the program counter to the value stored at the address (an indirect jump), allowing for loops.

- JRP (Jump Relative): Add the value at the address to the program counter.

- LDN (Load Negative): Take the number at the address, negate it, and load it into the accumulator.

- STO (Store): Copy the accumulator content to the address.

- SUB (Subtract): Subtract the value at the address from the accumulator.

- SUB (Alternate): A second opcode that performs the same subtraction as SUB.

- CMP (Compare): Skip the next instruction if the accumulator is negative.

- STP (Stop/Halt): Stop the program.

Note that it only subtracts numbers. They had to write extra code to perform addition.
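The usual trick is to negate twice. Load -a, subtract b to get -(a + b), store that, then load its negation. The sketch below models only the three SSEM operations this needs (LDN, SUB, STO) as a small Python simulator. The tuple-based program format and the memory addresses are made up for illustration and do not reflect the SSEM’s real 32-bit instruction encoding.

```python
def run_ssem_subset(program: list[tuple[str, int]], memory: dict[int, int]) -> int:
    """Execute LDN/SUB/STO instructions in order and return the accumulator."""
    acc = 0
    for op, addr in program:
        if op == "LDN":      # load the negated value at addr
            acc = -memory[addr]
        elif op == "SUB":    # subtract the value at addr
            acc -= memory[addr]
        elif op == "STO":    # store the accumulator at addr
            memory[addr] = acc
        else:
            raise ValueError(f"unsupported instruction: {op}")
    return acc


# Addition without an ADD instruction: a + b = -((-a) - b)
A, B, TEMP = 20, 21, 22  # hypothetical memory addresses
memory = {A: 7, B: 5, TEMP: 0}
add_program = [
    ("LDN", A),     # acc = -7
    ("SUB", B),     # acc = -7 - 5 = -12
    ("STO", TEMP),  # TEMP = -12
    ("LDN", TEMP),  # acc = 12
]
print(run_ssem_subset(add_program, memory))  # 12
```

Four instructions and a scratch word to do what a single ADD would do. This is the kind of bookkeeping every later layer of abstraction was built to hide.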

At this point in time programmers had to enter programs by setting switches, 32 of them for each machine code instruction in a program, and flick a couple more switches to copy it into memory and prepare for the next 32 switch settings.

Things were made a bit easier when they hooked up a punch card reader. Instead of flipping switches programmers could use a keypunch machine to enter the codes the machine understood and the punch card reader mechanised the input. Now the whole team could be writing programs at the same time.

Once they had computers running programs in the late ’40s, they realised that they could use the computers to make programming easier.

Assembly language was the first step – replacing the numeric values of machine code with short text strings, like the mnemonics in the list above. The first assembler was created by Kathleen Booth in 1947. It converted those easier-to-remember strings into the machine code the computer needed.

Ten years later, in 1957, FORTRAN appeared – the first widely used high level language. Instead of programmers writing commands for the basic mechanics of moving data in and out of memory and adding numbers, they could work at a higher level to implement the loop, branch and process primitives. In the later FORTRAN 77 dialect, a simple program looks like this:

```fortran
C     Sum the even numbers from 1 to 10
      INTEGER I, SUM
      SUM = 0
      DO 10 I = 1, 10
         IF (MOD(I,2) .EQ. 0) SUM = SUM + I
   10 CONTINUE
      PRINT *, 'SUM OF EVEN NUMBERS 1..10 =', SUM
      END
```

There have been other paradigms, but this move to a higher level of abstraction to write programs has not changed since 1957. New languages and new language extensions have been created in attempts to make programming faster and less error prone. This has included things like taking memory management out of the hands of the programmer and generating the code to manage memory automatically.

But despite all the languages introduced since FORTRAN, they each still boil down to generating machine code that implements the loop, branch and process primitives.

AI has changed that.

What AI has changed

Each step up in abstraction:

switches → punch cards → assembly language → high level language

was built on programmers exploring what computers could do and the best methods for using them. Time and practice across the growing number of computers and programmers allowed strategies to coalesce and best practices to appear, and these, being based on driving machines, could be mechanised themselves.

The introduction of AI coding assistants was made possible by the same collection of strategies and best practices alongside the creation of the LLM.

The Internet led to the creation of services like GitHub, where the free hosting of software projects created a library of publicly accessible source code. Estimates place it at 500 million+ projects taking up 20-30 petabytes of storage representing almost a trillion lines of code (that storage includes the full history of every project so don’t worry about the lines→storage math).

It still seems miraculous, but if you train an AI model that has hundreds of billions of parameters on hundreds of billions of lines of code, it becomes quite good at coding. It picks up the craft of coding – the structures, the idioms, the techniques – that millions of programmers have created and used.

It can write the branch, the loop and the process in any number of languages. It can combine them into functions, and can combine functions into modules that implement any functionality that it has seen enough times in its training.

And that “has seen enough times” is why we will always need programmers, but how they will program will change.

Even if an AI coding assistant has never seen your type of application before, that application is still built from the programming languages it knows, and it will still be composed of millions of loop, branch, process structures. Except now you don’t have to type out those millions of structures yourself. You can tell the AI to.

And that’s what the spec is for.

The Specification as the new source code

While AI can write code, it can’t read minds and it can’t intuit priorities. This makes it bad at architecture and design – both in coding and generally. Yes, it can recreate patterns it has seen enough times, and possibly tweak them a little if provided enough context, but it will never have your level of understanding.

Experienced developers can leverage that understanding in creating the specification that is given to an AI coding assistant to convert – to compile – into code. The spec becomes a higher level declarative method of programming, specifying what to build (and how to verify), rather than the traditional imperative style of programming that explicitly instructs the computer how to do each and every single step.
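A small way to see the difference between the two styles follows. The first function is imperative: it spells out each loop, branch and process step, computing the same sum as the FORTRAN program earlier. The “spec” is a set of checks that say what a correct result looks like without saying how to compute it. Any implementation, hand-written or AI-generated, can be accepted or rejected against it. The function names and spec clauses are illustrative assumptions, not a real spec format.

```python
from typing import Callable

Impl = Callable[[int], int]


def sum_even_imperative(n: int) -> int:
    """Imperative: every loop, branch and process step is spelled out."""
    total = 0
    for i in range(1, n + 1):  # loop
        if i % 2 == 0:         # branch
            total += i         # process
    return total


# Declarative "spec": what a correct answer must satisfy, not how to get it.
SPEC: list[tuple[str, Callable[[Impl], bool]]] = [
    ("sum of evens up to 10 is 30", lambda f: f(10) == 30),
    ("no evens below 2", lambda f: f(1) == 0),
    (
        "closed form n//2 * (n//2 + 1)",
        lambda f: all(f(n) == (n // 2) * (n // 2 + 1) for n in range(200)),
    ),
]


def check_against_spec(impl: Impl) -> list[str]:
    """Return the names of any spec clauses the implementation violates."""
    return [name for name, clause in SPEC if not clause(impl)]


print(check_against_spec(sum_even_imperative))                # []
print(check_against_spec(lambda n: sum(range(0, n + 1, 2))))  # []
print(check_against_spec(lambda n: sum(range(0, n, 2))))      # off-by-one is caught
```

Both correct implementations pass without the spec saying anything about loops. The effort moves into writing clauses precise enough to reject the off-by-one version.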

This is where the two types of programmers in the introduction diverge. One type enjoys working out every single step to create an elegant solution. The second type just wants the computer to do the work as quickly and easily as possible; for them, not having to type out every single tiny step, every single loop, branch, process, is a relief and a joy.

AI coding assistants don’t remove the need to think. Instead, they concentrate the thinking a developer needs to do, as they take over most of the rote work of coding. What is left is making decisions about architecture, how features should function, confirming correct operation, and so on.

A huge amount of thinking is front-loaded into creating the spec the AI coding assistant will follow. This can be done with the assistance of the AI coding assistant. They can research best practices, other implementations, similar use cases, and anything else that needs to be considered as part of the design process.

One of the clearest current proofs of this method is the Attractor spec from StrongDM’s Factory – their take on AI powered software development. They have gone all in on AI, including suggesting that if your developers aren’t burning through $1,000 in tokens per day you might not be using enough AI. The bulk of their token usage is spent on automated verification and testing.

If you read through the Attractor spec you will see the level of architectural detail they have found necessary to successfully direct AI to generate code.

They take it even further – the Attractor spec is described as a software release. To build it you just give it to an AI to implement. At least one person has already done just that: Dan Shapiro’s Kilroy, on GitHub.

The future will be different but familiar

Reading the Attractor spec and working with AI coding assistants makes the “jagged frontier” very apparent.

AI coding assistants, even with the latest models, still make dumb mistakes. Yet they can implement complex products given enough details.

Software is still going to be designed. Someone still needs to decide what pieces to put together and how. Turning a design into working code will still involve iteration and problem solving.

But for most software, it is all going to happen at a much higher level of abstraction than the loop, branch, process programmers have been typing out since the 1940s.